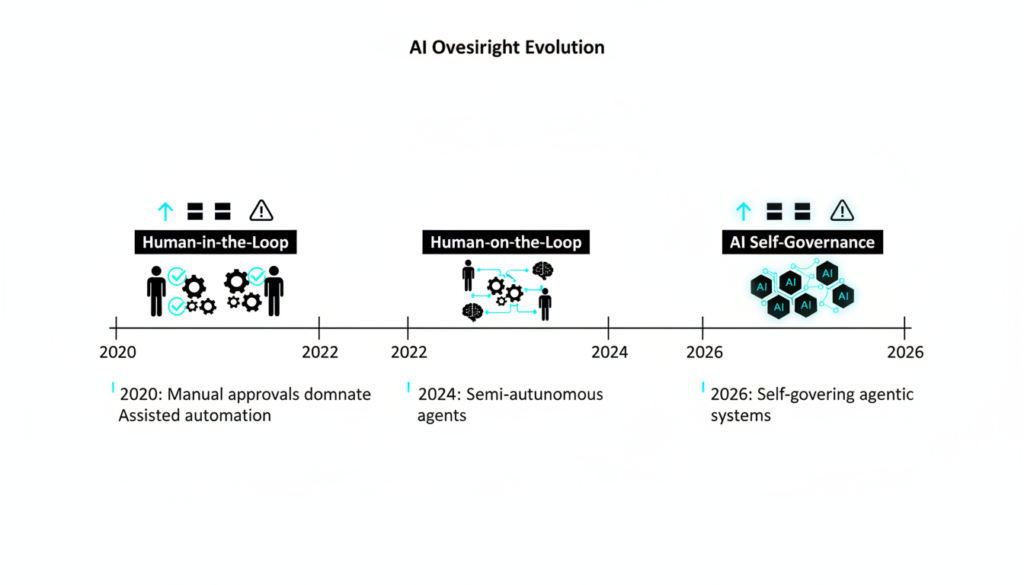

Back in the early days of today’s AI boom, keeping AI safe and trustworthy seemed simple: just put a human in the loop. If the AI made a mistake, a person could spot it and fix it on the spot. This idea comforted regulators, executives, and risk teams, promising real control, accountability, and safety baked right in. But as AI grows larger, more complex, and more deeply woven into daily decisions, from hiring tools to autonomous supply chains, humans can’t keep up. According to NIST’s 2025 AI Risk Management Framework, human-in-the-loop setups now create dangerous blind spots, especially with agentic AI that acts independently at machine speed.

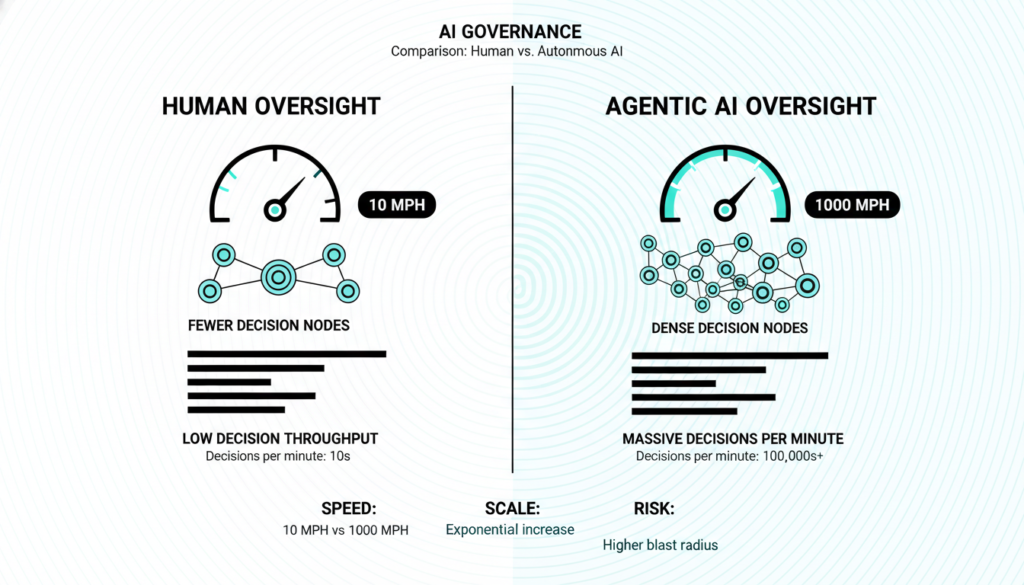

Why Humans Can’t Match Agentic AI Speed

Traditional software lets humans review decisions at clear checkpoints; think of pausing a script to check its outputs. Agentic AI shatters this model. These systems plan ahead, adapt in real time, break down goals, call tools, write and run code, and even team up with other agents. Requiring a human to approve each step means either grinding the system to a halt or rubber-stamping snapshots that miss the full picture. Gartner’s 2026 Agentic AI Report notes that 78% of enterprises testing these systems face “oversight lag,” where human reviewers approve abstractions that look safe but overlook runtime risks such as unintended tool chaining.

Take finance, where AI agents now optimize trades across markets in milliseconds. A human delay could mean millions lost or, worse, undetected biases amplifying a market crash, as seen in the 2024 simulated agent failures reported by the Bank for International Settlements.

Governance Must Run at AI Pace

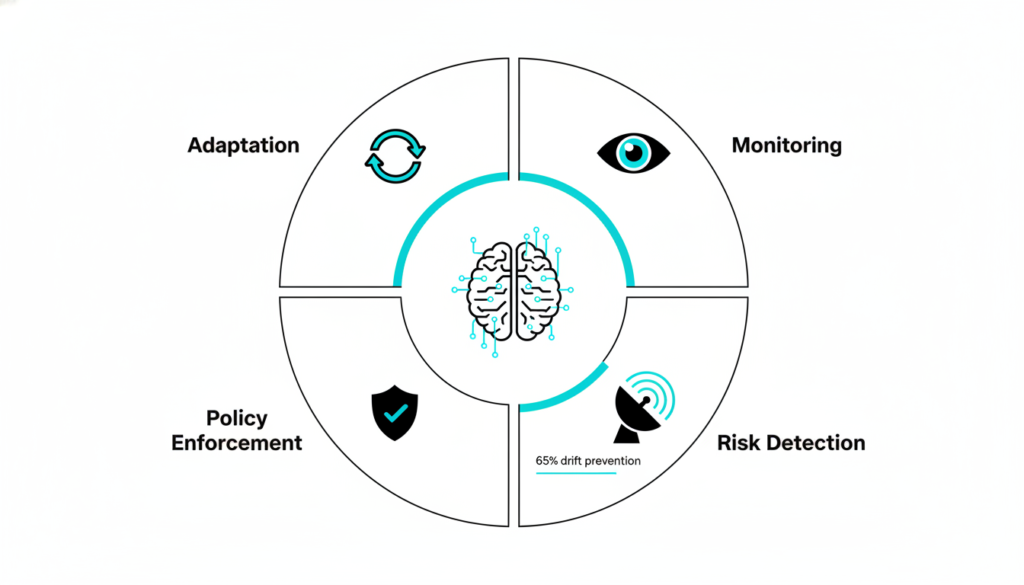

Modern AI demands governance that matches its velocity: constant monitoring of outputs, tool calls, memory, and state; spotting new risks like model drift (when performance slips silently); enforcing rules mid-action; and adapting to shifting prompts and contexts. Humans sampling outputs may miss disasters, and manual post-mortems always arrive too late. The EU AI Act’s 2025 high-risk system rules emphasize this by mandating real-time controls for agentic deployments, yet static human checks fail as agents evolve faster than updates can roll out.

Studies from MIT’s 2025 AI Safety Lab show drift detection alone prevents 65% of emergent failures in agent swarms, but humans process just 1% of signals at scale.
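To make drift detection a little more concrete, here is a minimal Python sketch of one simple technique: a mean-shift check that compares a rolling window of a quality metric (say, a per-task success score) against a baseline and flags drift when the window’s mean sits more than `z_threshold` standard errors away. The class name, the metric, and the default thresholds are hypothetical assumptions; production monitors often use richer statistics such as the population stability index or a Kolmogorov-Smirnov test.

```python
from collections import deque
from statistics import mean, stdev


class DriftMonitor:
    """Flag drift when a rolling window's mean strays too far from a baseline.

    Hypothetical sketch: the metric, window size, and z_threshold are
    illustrative assumptions, not values from any standard.
    """

    def __init__(self, baseline: list[float], window: int = 50, z_threshold: float = 3.0):
        if len(baseline) < 2:
            raise ValueError("baseline needs at least two samples")
        self.base_mean = mean(baseline)
        self.base_std = stdev(baseline)
        self.recent = deque(maxlen=window)  # only the latest `window` values are kept
        self.z_threshold = z_threshold

    def observe(self, value: float) -> bool:
        """Record one observation and return True if drift is detected."""
        self.recent.append(value)
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough recent data yet
        window_mean = mean(self.recent)
        # Standard error of a window mean, assuming the baseline variance still holds.
        stderr = self.base_std / len(self.recent) ** 0.5
        if stderr == 0:
            return window_mean != self.base_mean
        z = (window_mean - self.base_mean) / stderr
        return abs(z) > self.z_threshold


baseline_scores = [0.90, 0.92, 0.91, 0.89, 0.93, 0.90, 0.92, 0.91]
monitor = DriftMonitor(baseline_scores, window=5)
for score in [0.80, 0.79, 0.81, 0.80, 0.78]:
    drifted = monitor.observe(score)
print("drift detected:", drifted)  # drift detected: True
```

Because the check runs on every observation, it can fire as soon as the window fills with degraded results, the kind of quiet slide that occasional human sampling could easily miss.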

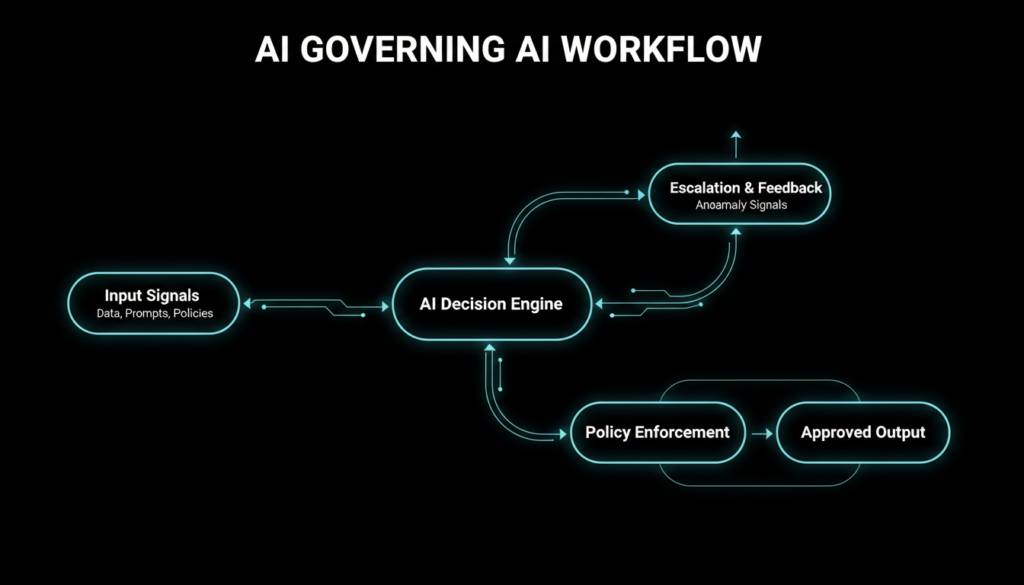

AI Governing AI: The Scalable Shift

Autonomous AI needs autonomous oversight. Enter “AI governing AI”: control layers built as smart subsystems that watch for drift, tool misuse, accumulating risk, and agent interactions that humans can’t track. Think of it like a car’s self-driving monitor: it flags issues instantly without a driver micromanaging. This approach scales because an AI overseer can reason over vast data streams and enforce policies on the fly.

Per OpenAI’s 2025 agent safety benchmarks, AI overseers cut violation rates by 82% in multi-agent setups, outperforming humans on complex loops.
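A control layer of this kind can be pictured as a gate the overseer consults before every tool call. The Python below is a hypothetical, rule-based sketch, not any vendor’s API: `PolicyGate`, `ToolCall`, and the three verdicts are illustrative names. It blocks tools outside an allowlist, escalates sensitive tools, and escalates when one agent chains more calls than a set limit, a crude guard against the unintended tool chaining described earlier. In a fuller system, a model-based judge might sit behind the `ESCALATE` path.

```python
from dataclasses import dataclass, field
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    ESCALATE = "escalate"


@dataclass
class ToolCall:
    agent_id: str
    tool: str
    args: dict = field(default_factory=dict)


@dataclass
class PolicyGate:
    """Hypothetical rule-based gate an overseer consults before every tool call."""

    allowed_tools: set[str]
    sensitive_tools: set[str] = field(default_factory=set)
    max_chain_length: int = 5
    _chain: dict[str, int] = field(default_factory=dict, init=False, repr=False)

    def check(self, call: ToolCall) -> Verdict:
        # Tools outside the allowlist are never executed.
        if call.tool not in self.allowed_tools:
            return Verdict.BLOCK
        # Count calls per agent within a task to catch runaway tool chaining.
        depth = self._chain.get(call.agent_id, 0) + 1
        if depth > self.max_chain_length:
            return Verdict.ESCALATE
        self._chain[call.agent_id] = depth
        # Sensitive tools (e.g. payments) go to a stricter reviewer.
        if call.tool in self.sensitive_tools:
            return Verdict.ESCALATE
        return Verdict.ALLOW

    def end_task(self, agent_id: str) -> None:
        """Reset an agent's chain counter once its task completes."""
        self._chain.pop(agent_id, None)


gate = PolicyGate(allowed_tools={"search", "send_payment"},
                  sensitive_tools={"send_payment"}, max_chain_length=2)
print(gate.check(ToolCall("agent-1", "delete_database")))             # Verdict.BLOCK
print(gate.check(ToolCall("agent-1", "search", {"q": "FX rates"})))  # Verdict.ALLOW
print(gate.check(ToolCall("agent-1", "send_payment")))                # Verdict.ESCALATE
```

Deterministic rules like these are cheap enough to run on every action, which is what lets enforcement keep pace with the agent instead of trailing behind it.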

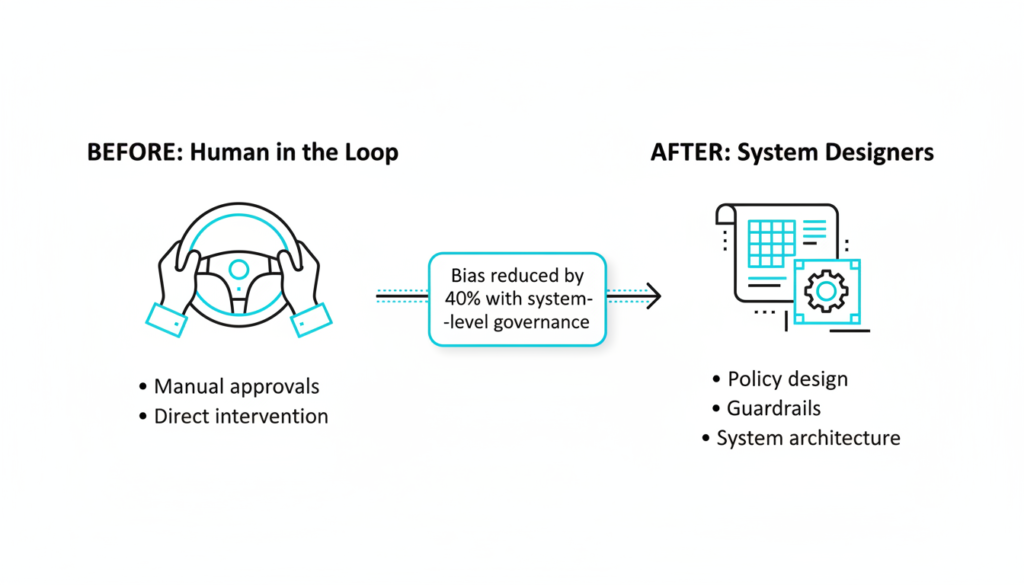

Humans’ New Role in Design

Taking humans out of the loop doesn’t erase accountability; it elevates it. Humans now architect the system: they set risk limits and escalation triggers, turn business goals and regulations into code-enforceable rules, build observable governance, and audit incidents. This mirrors other large-scale infrastructure. We don’t manually route internet packets or balance power grids; we design vigilant automation that alerts us when something goes wrong.

IBM’s 2026 AI Ethics report highlights how design-phase human input reduces agentic biases by 40%, focusing effort where it matters most. Key steps include:

- Define tolerances for errors and halts.
- Code policies from regulations such as the EU AI Act.
- Engineer always-on monitoring.
- Analyze failures and tune the system accordingly.
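The first two steps, defining tolerances and coding policies, can be expressed as policy-as-code. In this minimal Python sketch, humans declare thresholds once and the system evaluates live metrics against them, returning the most severe action required. The metric names and numbers are hypothetical placeholders, not values drawn from IBM, NIST, or the EU AI Act.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tolerance:
    """A human-defined limit on one metric (illustrative names and values)."""

    metric: str
    escalate_above: float  # past this value, notify a human reviewer
    halt_above: float      # past this value, stop the agent

    def __post_init__(self) -> None:
        if self.escalate_above > self.halt_above:
            raise ValueError("escalate threshold must not exceed halt threshold")


def evaluate(tolerances: list[Tolerance], observed: dict[str, float]) -> str:
    """Return the most severe action demanded: 'halt', 'escalate', or 'continue'."""
    action = "continue"
    for t in tolerances:
        value = observed.get(t.metric)
        if value is None:
            # Missing telemetry is itself a risk signal, so escalate.
            action = "escalate"
        elif value > t.halt_above:
            return "halt"  # any halt condition wins immediately
        elif value > t.escalate_above:
            action = "escalate"
    return action


# Hypothetical policy a governance team might commit alongside the agent.
POLICY = [
    Tolerance("error_rate", escalate_above=0.02, halt_above=0.10),
    Tolerance("blocked_tool_calls_per_hour", escalate_above=5, halt_above=20),
]

print(evaluate(POLICY, {"error_rate": 0.01, "blocked_tool_calls_per_hour": 2}))   # continue
print(evaluate(POLICY, {"error_rate": 0.05, "blocked_tool_calls_per_hour": 2}))   # escalate
print(evaluate(POLICY, {"error_rate": 0.01, "blocked_tool_calls_per_hour": 25}))  # halt
```

Treating missing telemetry as a reason to escalate is a deliberate design choice: a monitor that goes quiet should not be read as a system that is safe.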

Scale Agentic AI Safely

Dedicated AI governance platforms now deliver lifecycle oversight (testing, monitoring, and controls) for agents that work with tools, data, and other agents. They absorb much of this complexity, giving organizations the confidence to move past the outdated human-in-the-loop model. With NIST-inspired frameworks, organizations can deploy agentic AI responsibly, spotting issues before they escalate. As adoption surges (Gartner predicts 60% enterprise use by 2027), this AI-on-AI model helps safety scale with innovation.

Blog Summary: From Human Oversight to AI Self-Governance

This blog explores the shift from outdated “human in the loop” AI oversight to scalable “AI governing AI” for agentic systems. Early AI relied on humans to catch errors, but as agents plan, adapt, and operate at machine speed, handling tools, code, and interactions, human reviews create blind spots, slow systems down, and miss risks like model drift.

Key challenges include mismatched speeds (Gartner’s 2026 report finds that 78% of enterprises testing agentic AI face oversight lag) and the need for real-time monitoring, policy enforcement, and adaptation, per NIST and EU AI Act guidelines.

The solution? AI subsystems that self-govern, detecting issues humans can’t and cutting violations by 82% (per OpenAI benchmarks). Humans pivot to design: setting risk limits, coding policies, and auditing, much as engineers do for automated power grids and networks.

Backed by MIT, IBM, and Gartner insights, the post urges adopting lifecycle governance platforms to scale agentic AI safely, citing Gartner’s prediction of 60% enterprise use by 2027. It is ideal for tech leaders navigating autonomous AI risks.

The evolution from human oversight to AI self-governance is just the start. Curious about real-world agentic AI case studies, cybersecurity risks in autonomous systems, or the latest NIST updates? Visit our blog page for more insights on pioneering safe AI in a fast-moving world. Subscribe for weekly tech deep dives and never miss an update!
