From the outside, Physical Intelligence’s San Francisco office could be any other warehouse-style building. The only real clue is a small pi symbol on the door, slightly off-color from the rest of the entrance. Step inside, though, and the space feels less like a polished startup HQ and more like a working robotics lab in full motion.

Long wooden tables stretch across a big concrete room. Some double as a casual lunch area, scattered with Girl Scout cookies, jars of Vegemite, and overflowing condiment baskets. The rest are covered in monitors, robot arms, cables, and half-finished experiments. This is where Physical Intelligence is quietly building what many in Silicon Valley see as the next major leap in AI: a general “brain” for robots.

Robots Learning Everyday Tasks

At any given moment, several robotic arms are attempting to master everyday household jobs. One arm struggles to fold a pair of black pants, making slow, awkward adjustments. Another tries to turn a shirt inside out and keeps failing, but persists with small incremental improvements. A third arm appears more confident, quickly peeling a zucchini and dropping the shavings into a nearby container.

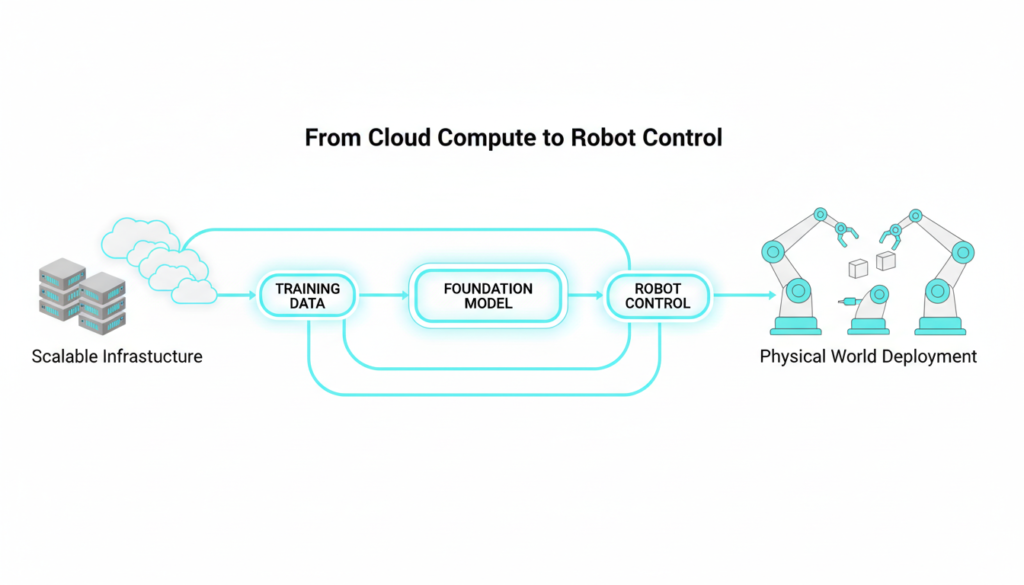

Co-founder and UC Berkeley professor Sergey Levine describes the setup as something like “ChatGPT, but for robots.” Instead of generating text, these systems generate physical actions in the real world. The arms you see are part of a continuous training loop: robots collect data at stations in offices, warehouses, and test kitchens, and that data feeds large robotic foundation models. New models then return to these stations for evaluation, where each arm might be running a different experiment.

Why Cheap Hardware And Smart Software Matter

One of the surprising things about Physical Intelligence’s lab is how unglamorous the hardware looks. The robot arms cost around $3,500 each at retail, and the founders say the material cost could drop below $1,000 if they built the arms in-house. A few years ago, most roboticists would have doubted that such affordable hardware could handle complex manipulation at all.

That is exactly the point: the company is betting that strong intelligence can compensate for relatively cheap hardware. Instead of hand-engineering a specialized robot for one task, it is building software general enough to adapt to many different robot bodies and environments. In the test kitchen, for example, robots practice tasks like making coffee on an espresso machine. Every attempt, whether a successful latte or a messy failure, becomes valuable data for improving the model.

The Investor Behind The Vision

Among the people moving through the lab is investor and co-founder Lachy Groom. Originally from Australia, he sold his first company as a teenager and later became an early employee at Stripe, before turning to angel investing in companies like Figma, Notion, and Ramp. Robotics, however, pulled him back toward building.

Groom began closely following the academic work of Sergey Levine and Stanford professor Chelsea Finn, both known for cutting-edge research in robot learning. When he learned they might start a company with Google DeepMind researcher Karol Hausman and others, he quickly got involved. For Groom, Physical Intelligence offered a rare combination: the right idea, at the right time, with the right team. That mix, he says, is far harder to find in Silicon Valley than most people think.

Billions Raised, But Not In A Hurry To Sell

In a short span, Physical Intelligence has raised more than $1 billion at a multibillion-dollar valuation. Most of that money goes into compute: the large-scale cloud and GPU resources needed to train general-purpose robotic models on huge volumes of data.

What’s unusual is what the company does not promise its investors: a clear commercialization timeline. Groom says plainly that he does not give firm answers on when or how the company will make money. Instead, the focus is on research and on building the strongest possible foundation model for robots, on the belief that valuable applications will follow once the core intelligence is powerful enough.

Cross-Embodiment Learning: One Brain, Many Bodies

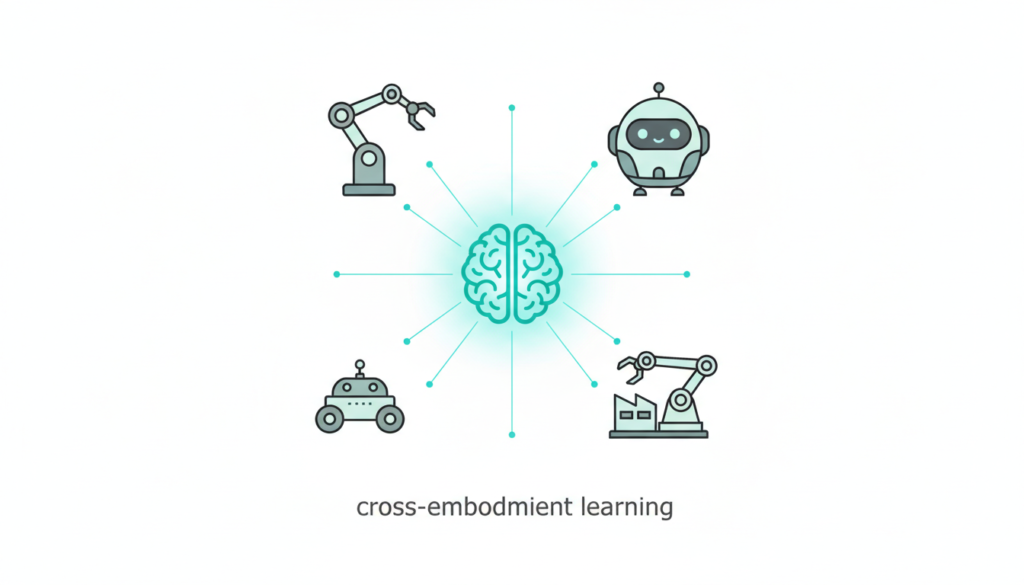

Co-founder Quan Vuong, who also came from Google DeepMind, describes the core technical strategy: cross-embodiment learning. In simple terms, the model learns from robots with different shapes, sensors, and capabilities, then transfers that knowledge across hardware platforms.

If someone builds a new kind of robot tomorrow, they should not need to start from scratch. Instead, they could plug into a model that already understands core skills like grasping, lifting, sorting, or peeling, and adapt it quickly. If that works as intended, it sharply lowers the marginal cost of bringing autonomy to new robot designs.

Today, Physical Intelligence already collaborates with a small number of companies across logistics, grocery, and even a nearby chocolate maker. In some narrow workflows, they claim their systems are already good enough for real-world deployment, even as the broader vision remains long-term.

Rivals And A Philosophical Divide

Physical Intelligence is far from alone in chasing a general robotic brain. Skild AI, based in Pittsburgh, has raised even more money and openly criticizes many robotics foundation models for relying too heavily on internet-scale vision-language training instead of physics-based simulation and real-world robotics data.

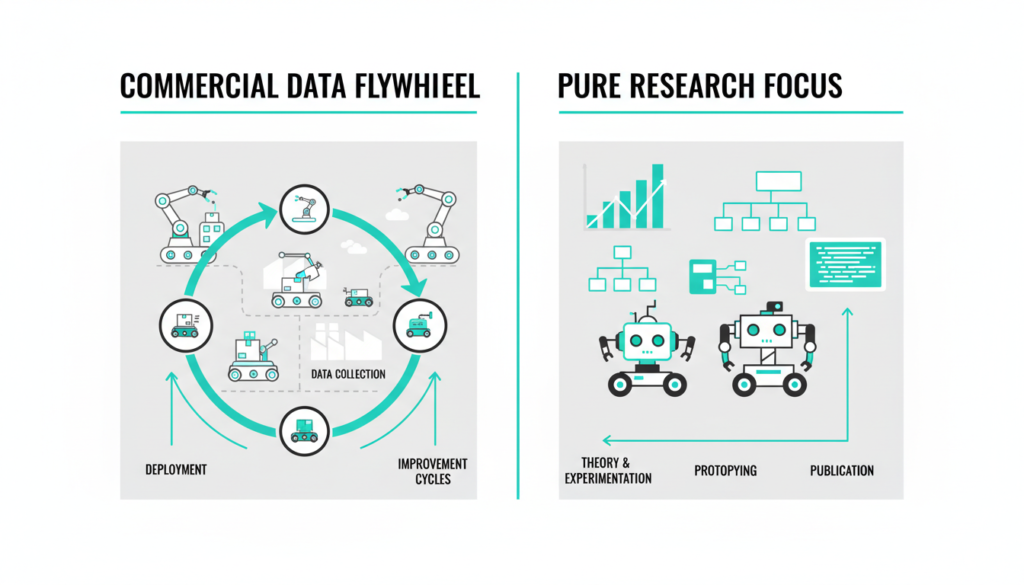

Skild AI has already commercialized its platform, reporting tens of millions of dollars in revenue from areas like security, warehousing, and manufacturing. Its argument is that deploying robots early creates a strong data flywheel: every real-world task completed feeds back into improving the model.

Physical Intelligence takes a different stance. The team believes delaying aggressive commercialization gives them room to optimize for generality and robustness instead of short-term revenue. In their view, solving “any platform, any task” at a deep level will ultimately be more defensible and more transformative, even if it takes longer.

Hardware Challenges And Real-World Questions

Despite the massive funding and ambitious roadmap, daily life at Physical Intelligence is still full of very practical problems. Hardware breaks. New parts arrive late. Safety protocols slow down testing. Scaling from around 80 employees to a larger team must happen carefully, because robotics research is deeply interdisciplinary and coordination-heavy.

There are also broader questions from outside the lab. Will people want robots in their homes folding laundry or peeling vegetables? How will pets react to moving machines? Are these systems solving painful, valuable problems, or creating new ones around safety, jobs, and trust? And can a general robotic intelligence truly outperform specialized systems built for one narrow task?

Yet inside the company, the mood remains confident. The founding researchers have spent years on this problem and believe that, thanks to advances in AI, cheaper hardware, and better simulation, the timing is finally right. Silicon Valley has a long history of backing ambitious visions without clear near-term business models. Many of those bets fail, but the few that succeed have historically reshaped entire industries.

Physical Intelligence is a San Francisco robotics startup building general-purpose AI “brains” for robots, enabling them to handle diverse tasks like folding clothes or peeling vegetables using affordable hardware and large-scale training data. Co-founded by researchers including UC Berkeley’s Sergey Levine, together with investor Lachy Groom, the company has raised over $1 billion at a $5.6 billion valuation and is prioritizing cross-embodiment learning over quick commercialization.