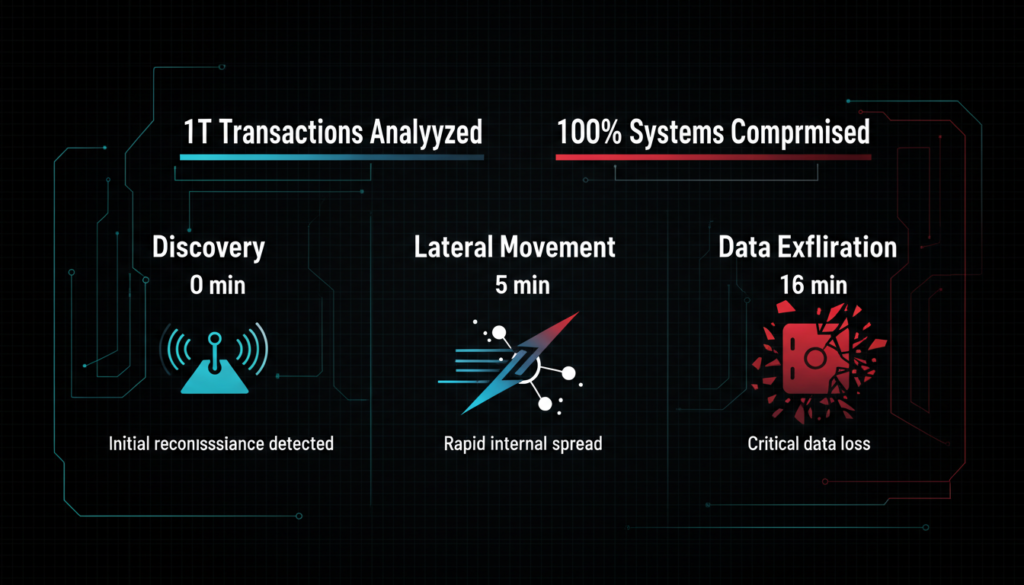

Artificial intelligence is exploding in enterprises, but it’s leaving cybersecurity in the dust. Zscaler’s latest ThreatLabz 2026 AI Security Report, which analyzes nearly one trillion AI/ML transactions from 2025, reveals that AI-driven attacks now strike at machine speed, faster than traditional defenses can react. As AI shifts from productivity booster to attack vector, businesses face a tipping point. “AI enables autonomous, machine-speed assaults by criminals and nation-states,” warns Zscaler EVP Deepen Desai. In the era of agentic AI, threats can escalate from detection to data theft in minutes.

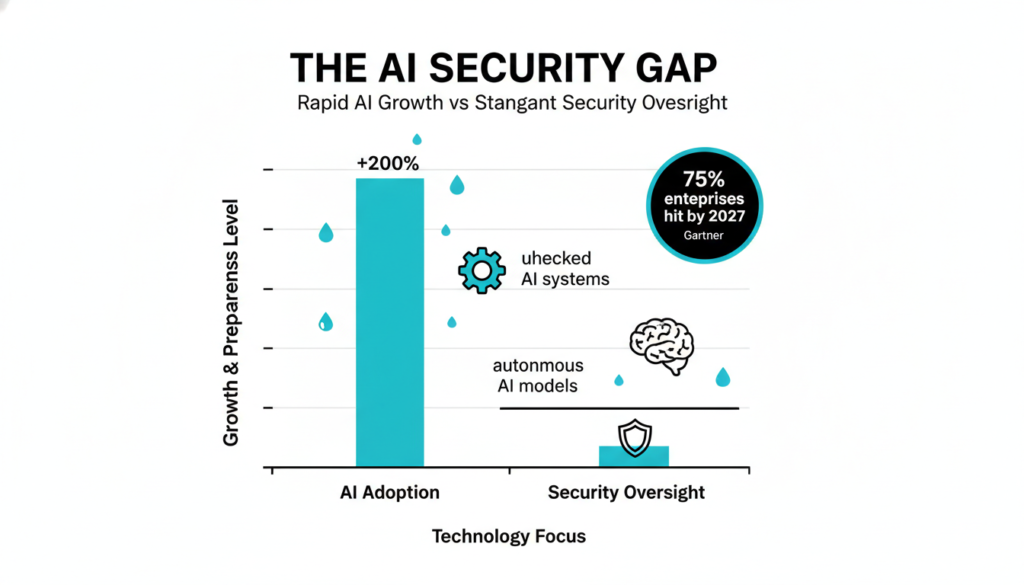

AI Adoption Races Ahead of Controls

AI use surged 200% in key sectors last year, yet most firms lack even a basic inventory of their AI models or features. Gartner echoes this, forecasting that by 2027, 75% of enterprises will grapple with AI-specific cyber incidents due to unchecked adoption. Experts like Stu Bradley from SAS note that enterprises are embracing AI without governance, risking millions of unmanaged interactions and terabytes of exposed sensitive data. “AI is everywhere, controls nowhere,” adds Ryan McCurdy of Liquibase. Employees casually feed customer data or code into AI tools for speed, while vendors switch on AI features by default in SaaS apps, bypassing security reviews.

Michael Bell of Suzu Testing highlights how these “background” AI features inherit user permissions, evading legacy filters. NIST’s AI Risk Management Framework stresses discovering these shadow AIs as step one, recommending automated scanning for embedded models in tools like email or CRM systems.
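
As a rough illustration of that discovery step, the sketch below tallies requests to AI services from egress log records. The hostname watchlist, the record fields (`user`, `host`), and the function name are all assumptions made for the example; a real inventory would draw on the organization's own proxy or gateway data.

```python
from collections import Counter

# Hypothetical watchlist of AI service hostnames; a real one would be maintained centrally.
AI_DOMAINS = {"chat.openai.com", "api.openai.com", "claude.ai", "gemini.google.com"}

def inventory_ai_usage(log_records):
    """Count requests per (user, AI host) pair from simple egress log records."""
    usage = Counter()
    for record in log_records:
        host = record["host"].lower()
        if host in AI_DOMAINS:
            usage[(record["user"], host)] += 1
    return usage

# Made-up sample records standing in for proxy logs.
sample_logs = [
    {"user": "alice", "host": "api.openai.com"},
    {"user": "alice", "host": "api.openai.com"},
    {"user": "bob", "host": "intranet.example.com"},
    {"user": "carol", "host": "claude.ai"},
]

usage = inventory_ai_usage(sample_logs)
for (user, host), count in sorted(usage.items()):
    print(f"{user} -> {host}: {count} request(s)")
```

A hostname match only catches traffic to standalone AI services. AI features embedded inside an existing SaaS app share that app's domain, so they would need a different signal, such as vendor feature flags or API audit logs.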

Machine-Speed Attacks Hit Hard

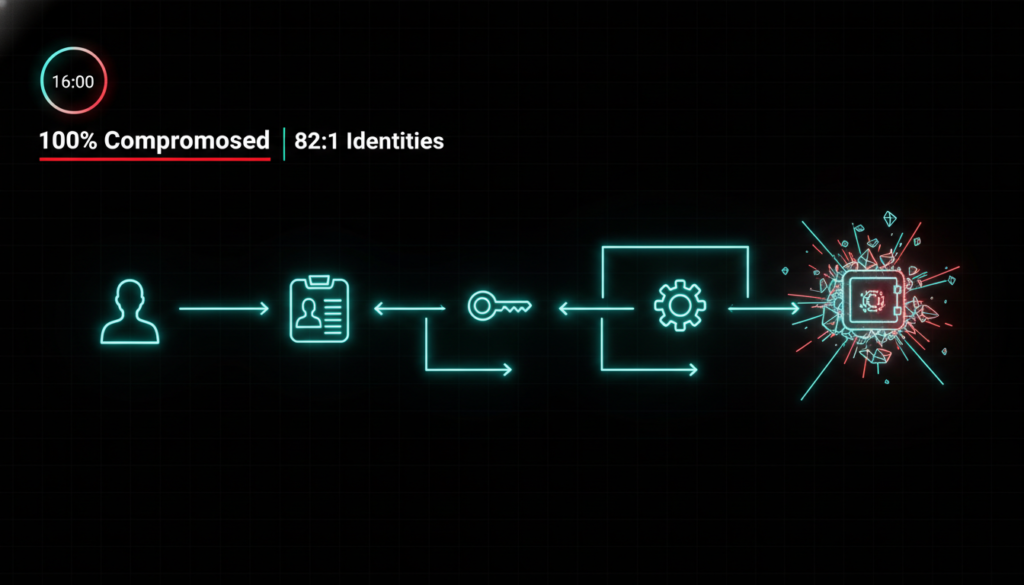

Zscaler’s red team tests exposed a harsh truth: 100% of enterprise AI systems fell in under 16 minutes. Permissions chain together: one account reads data, another automates actions, and a third writes to production, creating invisible attack paths. Troy Leach from Cloud Security Alliance points to the 82:1 ratio of non-human to human identities, with APIs granting AI agents unchecked access. Gartner adds that poor permission rotation leaves 60% of cloud identities over-privileged.
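
One way to picture these chained permissions is as a path search over a graph of identities and resources. The sketch below is a simplified illustration: the node names and edges are invented, and a real analysis would build the graph from actual IAM policy data.

```python
from collections import deque

# Hypothetical permission graph: an edge means "the source can act on or through the target".
PERMISSION_EDGES = {
    "reader-account": ["customer-data"],
    "customer-data": ["automation-agent"],   # the agent consumes the data it is fed
    "automation-agent": ["deploy-account"],  # the agent can trigger deployment jobs
    "deploy-account": ["production"],
    "audit-bot": ["logs"],
}

def find_attack_path(graph, start, target):
    """Breadth-first search returning the shortest chain from start to target, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(path + [neighbor])
    return None

path = find_attack_path(PERMISSION_EDGES, "reader-account", "production")
print(" -> ".join(path) if path else "no path")
```

No single edge here looks dangerous on its own. The risk only shows up when the edges are followed end to end, which is why per-account reviews tend to miss it.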

Brad Micklea of Jozu explains that AI models aren’t traditional code; they’re artifacts whose training data can be poisoned, and application security tools ignore them. To counter this, NIST advises runtime monitoring and dynamic access controls, such as just-in-time permissions that are revoked after use.
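
A minimal sketch of the just-in-time idea follows, assuming a toy in-memory broker. `AccessBroker`, its methods, and the identity and permission names are hypothetical; a production system would delegate grants and revocation to an IAM or secrets service.

```python
import time
from contextlib import contextmanager

class AccessBroker:
    """Toy in-memory broker; a real system would call an IAM or secrets service."""

    def __init__(self):
        self._grants = {}  # (identity, permission) -> expiry time in monotonic seconds

    def is_allowed(self, identity, permission):
        expiry = self._grants.get((identity, permission))
        return expiry is not None and time.monotonic() < expiry

    @contextmanager
    def just_in_time(self, identity, permission, ttl_seconds=60.0):
        """Grant a permission for the duration of the block, then revoke it."""
        key = (identity, permission)
        self._grants[key] = time.monotonic() + ttl_seconds
        try:
            yield
        finally:
            # Revoke even if the task raised, so access never lingers.
            self._grants.pop(key, None)

broker = AccessBroker()
with broker.just_in_time("report-agent", "read:sales-db", ttl_seconds=30):
    during = broker.is_allowed("report-agent", "read:sales-db")
after = broker.is_allowed("report-agent", "read:sales-db")
print(during, after)
```

The time-to-live acts as a backstop: even if revocation were somehow skipped, the grant would stop being honored once it expired.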

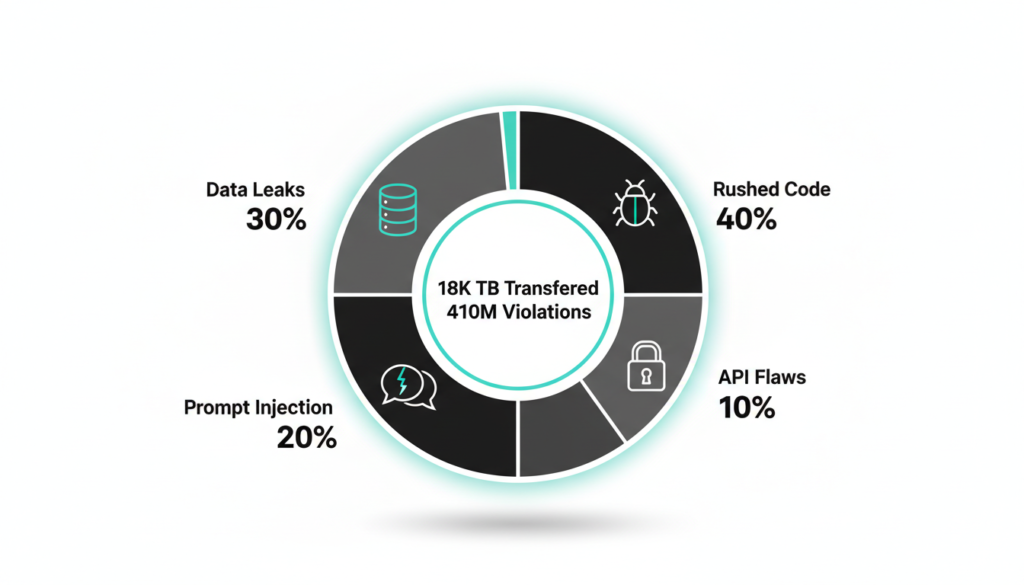

Rush to AI Breeds Shoddy Security

The AI gold rush means rushed code from novice teams, riddled with bugs. Eric Hulse of Command Zero cites exposed endpoints, prompt injections, and overly permissive APIs shipped to production. Randolph Barr of Cequence Security warns that foundational gaps like weak identity controls undermine even advanced protections. The data tells the tale: 18,033 TB transferred to AI apps in 2025 (up 93%), with 410 million DLP violations on ChatGPT alone, leaking SSNs, code, and health records.
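
To make the DLP point concrete, here is a minimal sketch of a pre-send check that masks SSN-like strings before a prompt leaves the organization. The pattern, the placeholder text, and the function name are assumptions for illustration; commercial DLP engines use far richer detection than a single regular expression.

```python
import re

# US SSN in the common 3-2-4 dashed form; deliberately simple, so it misses other formats.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

def redact_ssns(prompt):
    """Return the prompt with SSN-like strings masked, plus the number of matches."""
    redacted, count = SSN_PATTERN.subn("[REDACTED-SSN]", prompt)
    return redacted, count

# Made-up example text containing one SSN-shaped value and one unrelated number.
text = "Customer 123-45-6789 disputes invoice 2025-0042."
clean, hits = redact_ssns(text)
print(clean, hits)
```

A check like this could either block the request or forward the redacted version. Which of the two is appropriate is a policy decision rather than a technical one.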

Expanding on Zscaler’s data, IBM’s 2025 Cost of a Data Breach Report pegs AI-exploited breaches at an average of $5.3 million, 20% higher than other breaches, due to supply chain poisoning. The best fix? Embed security in CI/CD pipelines, per OWASP AI guidelines.

Turn Risks into Resilience

No panic needed, says Riaan Gouws of Forward Edge AI: focus on approved tools, data guardrails, and visibility. Rosario Mastrogiacomo of Sphere Technology frames it as identity governance: treat AI systems as discoverable identities with oversight. Zscaler urges zero trust architectures, AI-specific DLP, and model inventories. Gartner recommends AI security platforms that scan for toxins and enforce least-privilege access.

Practical steps from NIST: Audit AI inventories quarterly, rotate permissions dynamically, and train teams on prompt hygiene. Indian firms, per NASSCOM’s 2026 cyber outlook, can leverage local zero-trust adopters like Zscaler partners to stay ahead in BFSI and IT sectors.

Emerging AI Threats in 2026

Agentic AI amplifies dangers: Google Cloud’s 2026 forecast predicts attackers normalizing AI across attack chains, exploiting prompt injections to hijack systems. Deepfakes surged 680% year over year, powering phishing that fools human reviewers. Zscaler spotted malware impersonating AI platforms to lure downloads.

Supply chain attacks poison models via tainted data; Gartner sees nation-states weaponizing LLMs for stealth operations. For India, rising deepfake scams in finance demand local AI detection tools.

Proven Security Strategies for AI

Secure the stack: implement NIST’s Govern, Map, Measure, Manage cycle for lifecycle risks. Key moves include adversarial testing in MLOps (for example, with the Adversarial Robustness Toolbox), API rate limits, and least privilege for agents.
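
For the API rate limits mentioned above, a token bucket is one common approach. The article does not name a specific algorithm, so treat the sketch below as an assumption; the class name, parameters, and injectable clock are illustrative choices.

```python
import time

class TokenBucket:
    """Allow up to `capacity` calls in a burst, refilled at `rate` tokens per second."""

    def __init__(self, capacity, rate, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock  # injectable so the behavior can be tested deterministically
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self):
        now = self.clock()
        # Refill in proportion to elapsed time, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

# Simulated clock: three calls at t=0, then one more after a second has passed.
fake_time = [0.0]
bucket = TokenBucket(capacity=2, rate=1.0, clock=lambda: fake_time[0])
results = [bucket.allow(), bucket.allow(), bucket.allow()]
fake_time[0] = 1.0
results.append(bucket.allow())
print(results)
```

Keeping one bucket per agent identity, rather than one per API, would limit how fast any single compromised agent can pull data.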

Zscaler pushes AI-powered DLP, app segmentation, and breach prediction. Train teams on prompt hardening, and audit non-human identities. The AI security market is projected to hit $136B by 2032, so invest now.

AI Cyber Risk Summary

AI turbocharges threats (28M attacks in 2025, 100% of systems vulnerable in minutes), but zero trust and governance flip the script. Act fast: inventory models, harden prompts, monitor agents. Gartner predicts that 17% of attacks will be GenAI-driven by 2027. Secure AI today for tomorrow’s edge: no slowdown, just smarter defense.
