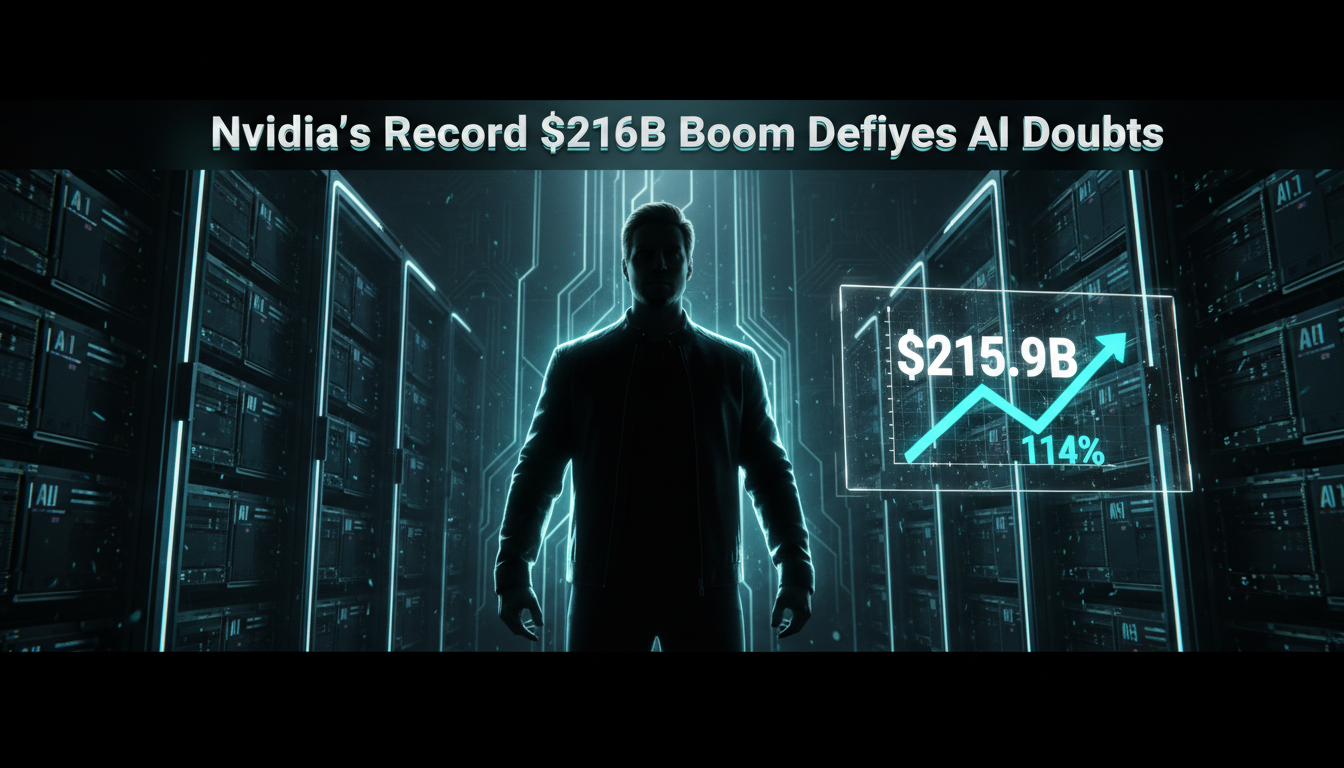

Nvidia just smashed expectations with a staggering $215.9 billion in annual revenue for fiscal year 2026, a 114% jump from last year. Investors had questioned whether massive AI spending was sustainable, and the chip powerhouse proved them wrong. In its latest quarter (ending January 2026), sales soared 73% year over year to $39.3 billion, crushing analyst predictions of $38.1 billion. CEO Jensen Huang called it a sign of “exponential computing demand,” with customers pouring billions into AI infrastructure.
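For readers who want to sanity-check the headline numbers, here is a minimal Python sketch of the arithmetic behind them. It takes only the figures quoted above and backs out the prior-period revenue each growth rate implies, plus the size of the beat against the analyst estimate. The function names `implied_prior` and `beat_pct` are illustrative choices, not part of any Nvidia disclosure.

```python
def implied_prior(current: float, growth_pct: float) -> float:
    """Back out the prior-period figure from a current figure and its growth rate."""
    return current / (1 + growth_pct / 100)


def beat_pct(actual: float, estimate: float) -> float:
    """Percentage by which an actual result exceeded an estimate."""
    return (actual - estimate) / estimate * 100


# Figures as quoted in the article, in billions of US dollars.
fy_revenue, fy_growth = 215.9, 114
q4_revenue, q4_growth = 39.3, 73
q4_estimate = 38.1

print(f"Implied prior-year annual revenue: ${implied_prior(fy_revenue, fy_growth):.1f}B")
print(f"Implied year-ago quarterly revenue: ${implied_prior(q4_revenue, q4_growth):.1f}B")
print(f"Beat versus analyst estimate: {beat_pct(q4_revenue, q4_estimate):.1f}%")
```

The same two helpers work for any "up X% to $Y" claim, which makes them a quick way to cross-check whether reported growth rates and revenue totals line up.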

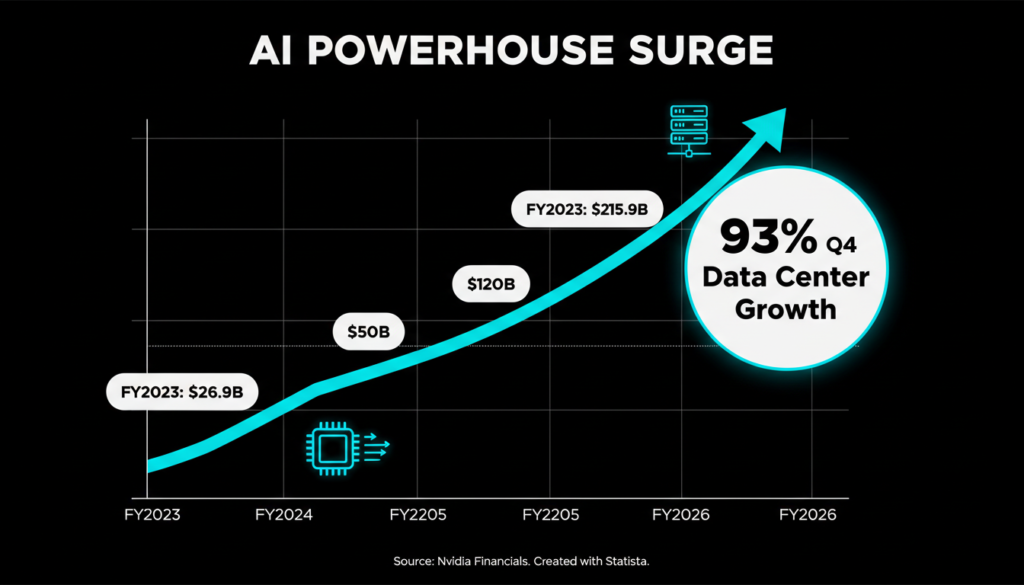

This isn’t just hype. Nvidia’s dominance stems from its GPUs, which power everything from ChatGPT training to drug discovery. Despite economic headwinds and talk of AI fatigue, the company’s data center segment, its cash cow, hit $35.6 billion in Q4 alone, up 93% from the prior year.

Why Investors Were Skeptical, and Why They’re Wrong

Wall Street has grilled Nvidia over “circular financing” fears: deals in which the company invests in AI startups that then buy its chips, potentially inflating demand signals. Critics worry this web of partnerships masks true market pull. But Nvidia’s results tell a different story: gross margins held steady at 73.5% in Q4, signaling genuine profitability amid surging orders.

Huang addressed this head-on: “Our customers are racing to build AI factories, the backbone of the AI industrial revolution.” Big Tech validates it: the hyperscalers Microsoft, Amazon, Google, and Meta alone drive 70% of Nvidia’s data center revenue, per earnings calls. Bloomberg reports these firms plan $200B+ in capex for 2026, much of it tied to Nvidia silicon.

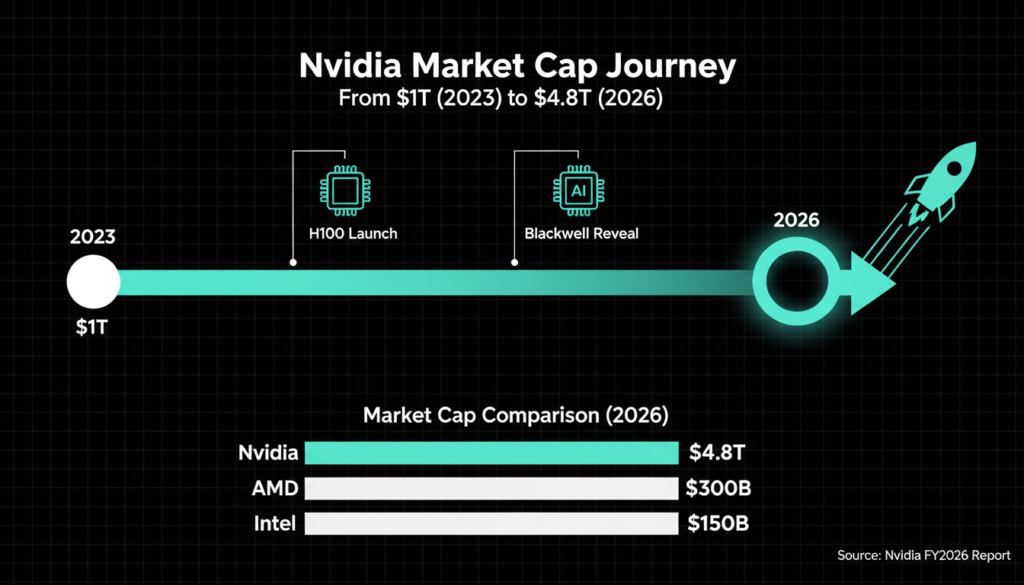

Nvidia’s Skyrocketing Valuation and AI Empire

At a $4.8 trillion market cap, Nvidia eclipses Apple and Microsoft as the world’s most valuable public company. Its H100 and Blackwell GPUs are the gold standard for training massive AI models like those from OpenAI and Meta. Deepwater Asset Management’s Gene Munster nailed it on X: “AI is accelerating faster than non-users can grasp.”

This buildout is a multi-year marathon. Reuters estimates the AI infrastructure market will hit $1 trillion by 2030, with Nvidia capturing 80-90% GPU share. Competitors like AMD and custom chips from hyperscalers nibble at the edges, but Nvidia’s CUDA software ecosystem locks in developers: over 4 million now, per company stats.

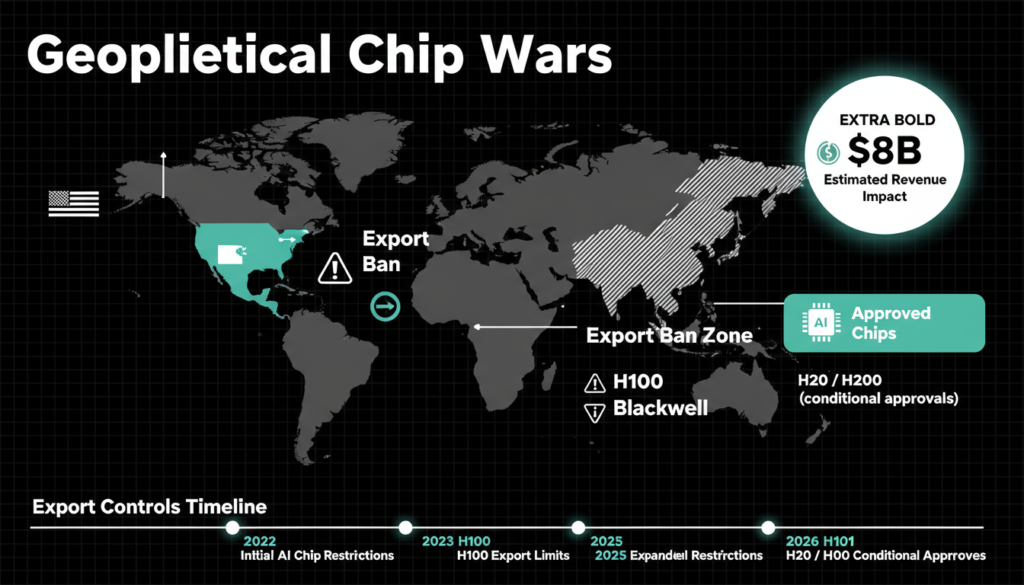

Navigating US-China Geopolitics

Geopolitics adds drama. Nvidia’s outlook skipped China revenue projections amid US export curbs. Last month, the Trump administration greenlit H200 chip sales to China under restrictions, but a Commerce Department official confirmed that no units have shipped yet. This follows Biden-era bans on advanced A800/H800 exports, which cost Nvidia $8B in prior quarters.

Still, Nvidia adapts: its China-optimized H20 chips generated $15B+ in FY2026, per filings. Broader tensions could evolve: Trump’s “America First” push might ease select sales, but Bloomberg warns of retaliatory Chinese tariffs on US tech.

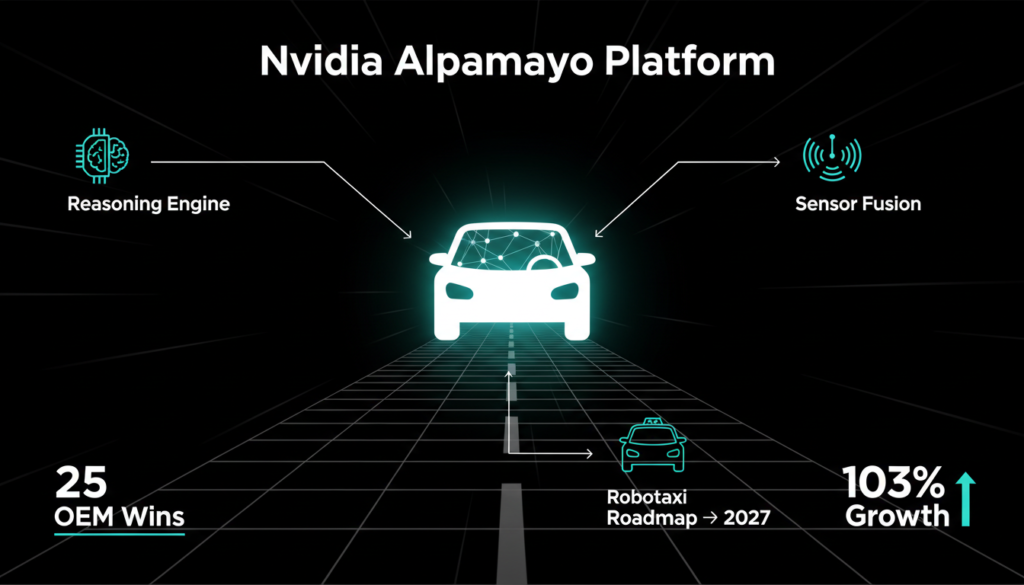

Expanding Beyond Chips: Robots, Cars, and More

Nvidia isn’t resting on its silicon laurels. At CES 2026 in Las Vegas, Huang unveiled “Alpamayo,” an open-source AI model that brings reasoning to self-driving cars. Paired with the Drive Thor platform, it promises Level 4 autonomy: cars handling complex urban driving without human input.

Nvidia also teased a 2027 robotaxi launch with an unnamed partner (rumors point to Waymo or Uber). This automotive push is huge: the segment hit $570M in Q4, up 103%, fueled by 25+ OEM design wins.

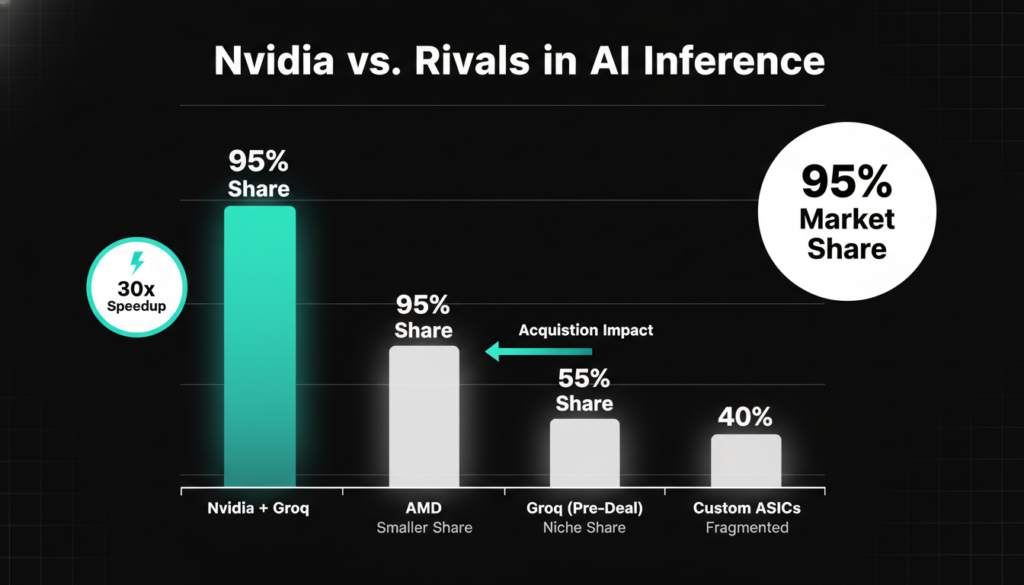

Crushing Competition in AI Inference

Nvidia leads AI training but has faced heat in inference, the phase where models process real-world queries. Enter the $20B Groq acquisition in Q4: Groq’s LPUs (Language Processing Units) excel at ultra-fast inference, slashing latency for chatbots and agents.

This bolsters Nvidia’s full-stack play. Inference now drives 40% of data center workloads (up from 20% in 2024, per Gartner), and Groq tech integrates with Blackwell for 30x speedups. CEO Huang: “Inference is the new frontier; our roadmap crushes it.”

Nvidia’s Rubin architecture (the successor to Blackwell) is slated for 2027, promising 4x inference performance via NVLink fusion. Competitors like Cerebras and SambaNova push wafer-scale chips, but Nvidia’s 95% inference market share (IDC data) remains unchallenged.

Nvidia’s Bold 2026 Outlook

Looking ahead, Nvidia forecasts Q1 FY2027 revenue at $43B (±2%), implying 100%+ growth. Full-year guidance eyes $220B+, with Blackwell ramping to supply 1 million+ GPUs. Huang emphasized supply chain fixes: “Blackwell is in full production; demand exceeds supply 10:1.”
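To make the guidance figure concrete, the short Python sketch below turns a "midpoint ± tolerance" forecast into a dollar range. It also shows the largest year-ago base that would still let the midpoint count as 100% growth. The helper names `guidance_band` and `max_base_for_growth` are hypothetical, and the only inputs are the $43B midpoint and ±2% tolerance quoted above.

```python
def guidance_band(midpoint: float, tolerance_pct: float) -> tuple[float, float]:
    """Return the (low, high) range implied by a midpoint and a +/- percentage."""
    low = midpoint * (1 - tolerance_pct / 100)
    high = midpoint * (1 + tolerance_pct / 100)
    return low, high


def max_base_for_growth(target: float, growth_pct: float) -> float:
    """Largest prior-period figure for which `target` is at least `growth_pct` growth."""
    return target / (1 + growth_pct / 100)


# Guidance as quoted in the article, in billions of US dollars.
midpoint, tolerance = 43.0, 2

low, high = guidance_band(midpoint, tolerance)
print(f"Guided range: ${low:.2f}B to ${high:.2f}B")
print(f"Year-ago base needed for 100% growth: at most ${max_base_for_growth(midpoint, 100):.2f}B")
```

Comparing the second figure against the actual year-ago quarter is a simple way to test whether a "100%+ growth" framing holds at the guided midpoint.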

Challenges linger: inflation, energy costs for AI data centers (projected at $100B/year by 2030, per the IEA), and regulatory scrutiny. Yet with AI adoption exploding (enterprise AI spend is up 84% YoY, per McKinsey), Nvidia’s moat of hardware, software, and ecosystem is ironclad.

The Bigger AI Revolution Picture

Nvidia’s surge spotlights AI’s transformation from hype to trillion-dollar reality. Healthcare models cut drug discovery from years to months; finance AI spots fraud in milliseconds. But ethical questions loom: AI’s energy use rivals that of small countries, and job shifts are accelerating.

For investors, Nvidia stock (NVDA) trades at 50x forward earnings: pricey, but justified by 100%+ growth. Diversify via ETFs like QQQ, but Nvidia remains the pure-play bet.

In summary, Nvidia didn’t just beat estimates; it redefined AI economics. As Huang says, “The compute era is here.” Stay tuned for Blackwell’s ripple effects.
