AI is exploding in workplaces, but security can’t keep up. New research from Cyberhaven reveals that nearly 40% of employee AI interactions now involve sensitive corporate data such as source code, HR records, or sales intel. Workers are bypassing official tools in favor of powerhouses like Claude and DeepSeek, and companies are splitting into bold “frontier” adopters and cautious “laggards,” with many losing their grip on their data.

Cyberhaven Labs tracked this via real-time data lineage across endpoints, SaaS apps, and AI tools. Growth often trumps security, creating a Wild West where tools spread faster than policies.

AI Tool Explosion Overwhelms Networks

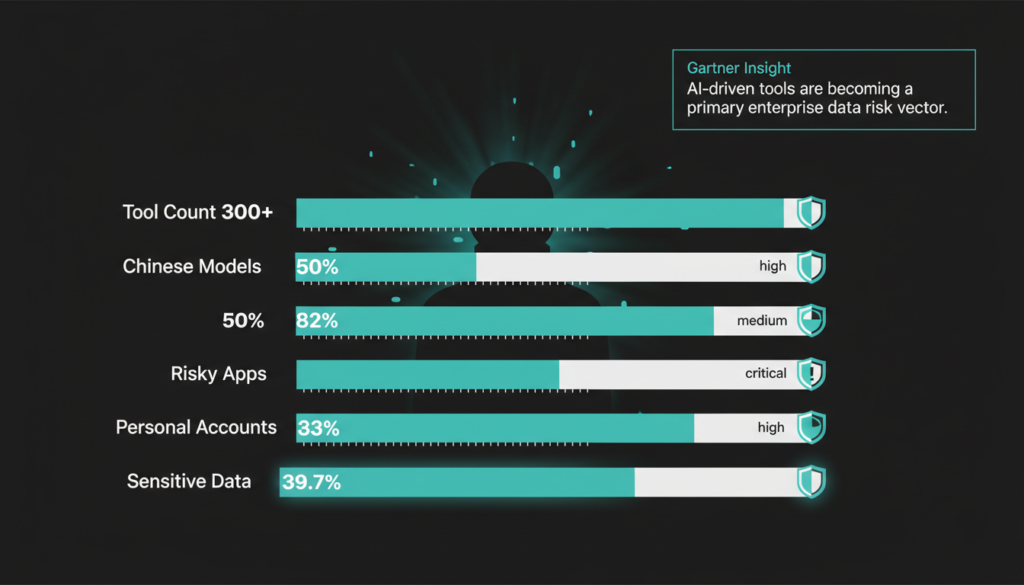

At frontier firms, more than 300 GenAI tools are in use and 70% of staff have adopted them, while laggards hover at 2% adoption. Employees chasing productivity build shadow AI setups that bypass controls. Cyberhaven’s top findings:

- High-adopters use over 300 GenAI tools enterprise-wide.

- Chinese open-weight models (e.g., DeepSeek) claim 50% of endpoint usage.

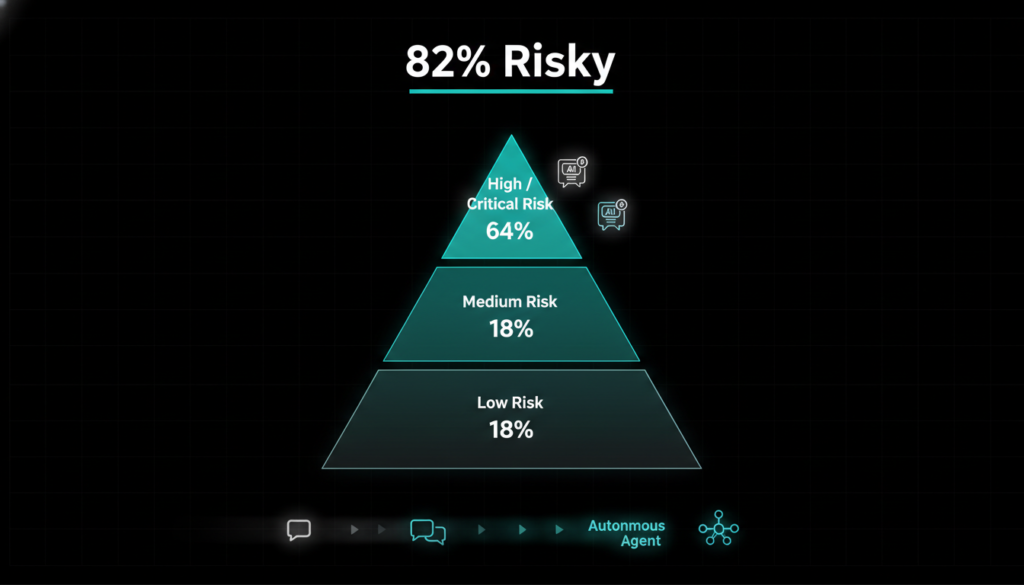

- 82% of the top 100 GenAI SaaS apps are rated medium, high, or critical risk.

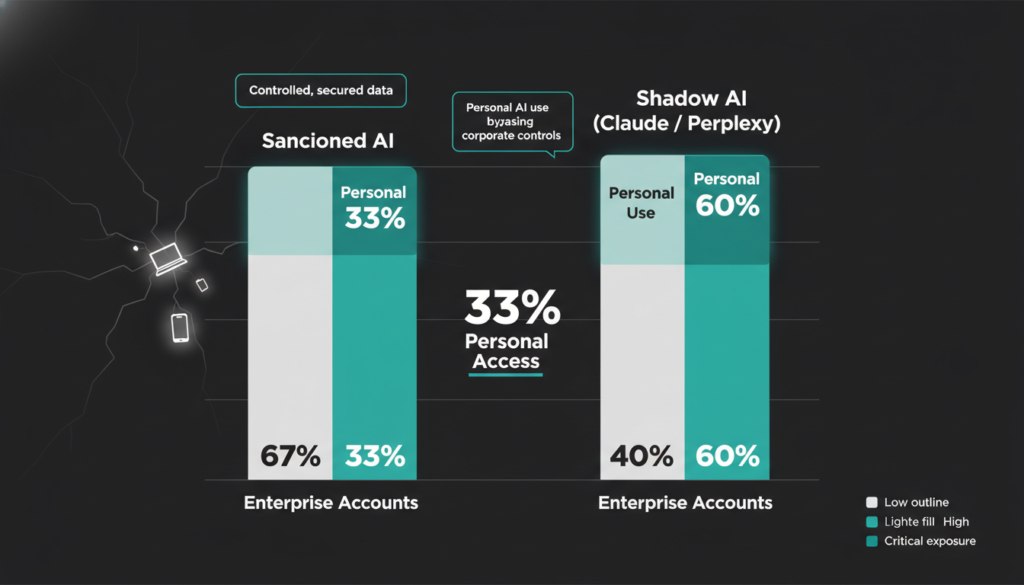

- One-third of staff access tools via personal accounts, fueling shadow AI.

- 39.7% of AI interactions include sensitive data.

Gartner’s 2025 AI Security Report echoes this, warning 45% of enterprises grapple with unmanaged shadow AI, amplifying breach risks by 30%.

Frontier vs. Laggard Divide

Frontier leaders push official AI strategies and foster experiment-friendly cultures. Laggards cling to “block first” mindsets, tangled in legacy systems and trust gaps, and see AI as a threat rather than a booster.

Cyberhaven CEO Nishant Doshi notes: “Frontier firms enable secure productivity; laggards manage risks reactively.” IBM’s 2024 Cost of a Data Breach Report shows what is at stake financially: the global average breach cost reached $4.88 million, a 10% jump over the prior year, and unmonitored tools widen that exposure.

Chinese Models Storm Enterprises

Chinese open-weight models like DeepSeek-R1 (launched Jan 2025) now account for 50% of endpoint AI use, rivaling U.S. leaders in coding tasks. Employees prioritize performance over geopolitics, and the tools slip past filters.

Doshi highlights LMArena benchmarks that favor these models. NIST’s AI Risk Management Framework stresses monitoring foreign models to curb supply chain risks, and recent scans show their enterprise footprint has grown 40%.

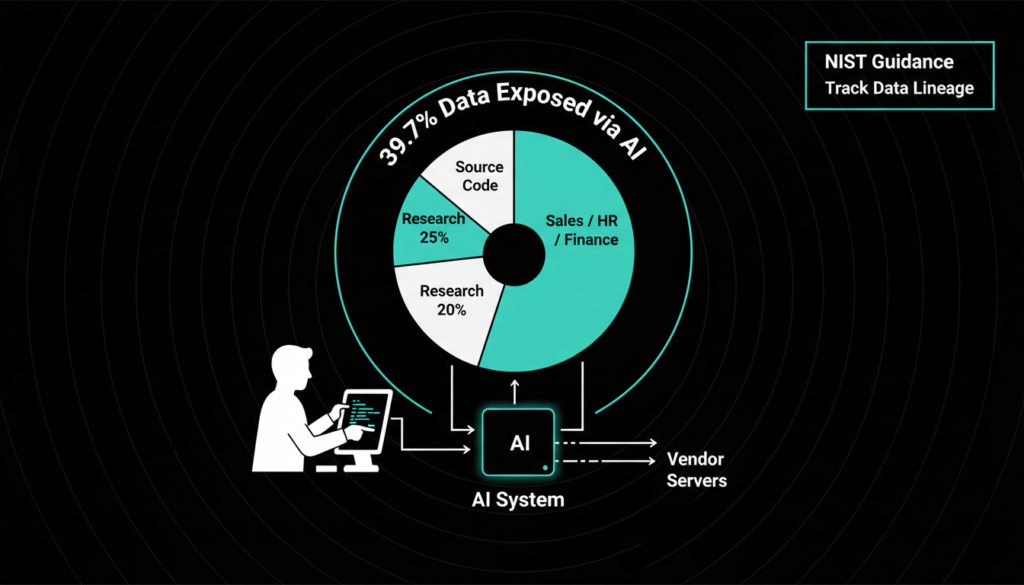

Workers Unwittingly Leak Secrets

A shocking 39.7% of AI prompts include sensitive info: source code, R&D notes, finance docs. Many workers don’t grasp that this data leaves corporate control, especially when vendors train on user inputs.
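To make a figure like this concrete, here is a minimal sketch of how a team might estimate the share of prompts carrying sensitive data. It is not Cyberhaven’s method, which relies on data lineage; the pattern names, the regexes, and the `classify_prompt` and `sensitive_share` helpers are all hypothetical and deliberately crude.

```python
import re

# Hypothetical keyword patterns, for illustration only. Real data-loss
# prevention relies on far richer signals and will not match these regexes.
SENSITIVE_PATTERNS = {
    "source_code": re.compile(r"\b(def|class|import|function|public static)\b"),
    "credential": re.compile(r"\b(api[_-]?key|secret|password)\s*[:=]", re.IGNORECASE),
    "financial": re.compile(r"\b(revenue forecast|invoice|payroll)\b", re.IGNORECASE),
}


def classify_prompt(prompt: str) -> list[str]:
    """Return the sorted names of every pattern that matches the prompt."""
    return sorted(
        name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(prompt)
    )


def sensitive_share(prompts: list[str]) -> float:
    """Fraction of prompts that match at least one sensitive pattern."""
    if not prompts:
        return 0.0
    flagged = sum(1 for prompt in prompts if classify_prompt(prompt))
    return flagged / len(prompts)


if __name__ == "__main__":
    sample = [
        "api_key = abc123",
        "Summarize this meeting about lunch",
        "def parse(x): return x",
        "What is the capital of France?",
    ]
    print(f"{sensitive_share(sample):.1%} of prompts flagged")
```

Keyword matching like this produces false positives (an everyday sentence containing “import”, for example) and misses anything it has no pattern for, so treat the result as a rough signal rather than a measurement.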

Lack of awareness drives “block-first” policies. Doshi warns: “Employees blur ‘sensitive’ lines in daily workflows like CRM.”

Personal Accounts Fuel Shadow AI

One-third of staff use personal logins for tools like Claude (a top pick for coding) and Perplexity (AI search), which beat clunky corporate options. Shadow AI proliferates derivative copies of data at machine speed.

Doshi urges robust governance. This aligns with the shadow AI surge Gartner describes, in which personal tools evade 60% of detections.

Most GenAI Apps Are Risky

82% of the top 100 GenAI SaaS tools score medium to critical risk, per Cyberhaven, yet employees flock to them for niche perks like NoteGPT’s instant summarizers, which require no prompt expertise.

Doshi says enterprise tools lag behind developer-driven innovation. The shift favors embedded agents over chatbots, a trend booming among engineers.

AI’s Workplace Future

AI adoption will surge, so track apps per employee and usage rates to gauge productivity wins. 2025 was year one for agents; 2026 ramps up. Doshi: “Secure now, or regret later.”
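As a rough illustration of those two metrics, the sketch below computes a usage rate and apps per active employee from a list of usage events. The `(employee, app)` event format and the `adoption_metrics` helper are assumptions made for this example, not part of any Cyberhaven tooling.

```python
from collections import defaultdict


def adoption_metrics(events, headcount):
    """Compute simple AI adoption metrics.

    events: iterable of (employee_id, app_name) pairs, one per observed use
            (a hypothetical log format).
    headcount: total number of employees in the organization.
    """
    apps_by_employee = defaultdict(set)
    for employee, app in events:
        # A set ignores repeat uses of the same app by the same person.
        apps_by_employee[employee].add(app)

    active = len(apps_by_employee)
    total_apps = sum(len(apps) for apps in apps_by_employee.values())
    return {
        # Share of the workforce that used at least one AI app.
        "usage_rate": active / headcount if headcount else 0.0,
        # Average number of distinct apps among employees who used any.
        "apps_per_active_employee": total_apps / active if active else 0.0,
    }


if __name__ == "__main__":
    events = [
        ("ana", "Claude"),
        ("ana", "Perplexity"),
        ("ben", "Claude"),
        ("ana", "Claude"),
    ]
    print(adoption_metrics(events, headcount=4))
```

Tracking both numbers over time shows whether adoption is broadening across staff or concentrating among a few heavy users.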

NIST advises tracing data across the AI lifecycle; pair that with tools like Cyberhaven for control.

AI Risks: 40% of Employee AI Use Shares Sensitive Data

Cyberhaven’s latest research uncovers a stark reality: nearly 40% of employee AI interactions involve sensitive corporate data, like source code and HR files, as workers bypass sanctioned tools for Claude, DeepSeek, and others. This “Wild West” splits firms into frontier adopters (300+ tools, 70% of the workforce) and laggards (2% adoption), with Chinese models grabbing a 50% endpoint share and 82% of the top 100 GenAI apps rated medium to critical risk.

Shadow AI thrives via personal accounts (33% usage), fueling unmonitored data leaks amid low awareness. Doshi urges data lineage tracking and governance to harness AI safely, as breaches now cost $4.88M on average according to IBM.
