London-based startup Mirai is optimizing how AI models run directly on smartphones and laptops, reducing dependence on the cloud. Founded by serial entrepreneurs Dima Shvets and Alexey Moiseenkov, the company just raised $10 million in seed funding to make on-device AI faster, more private, and developer-friendly. The shift responds to rising cloud costs, where inference expenses can consume 40-60% of AI operational budgets for many startups, making edge computing not just a luxury but a necessity for sustainable growth.

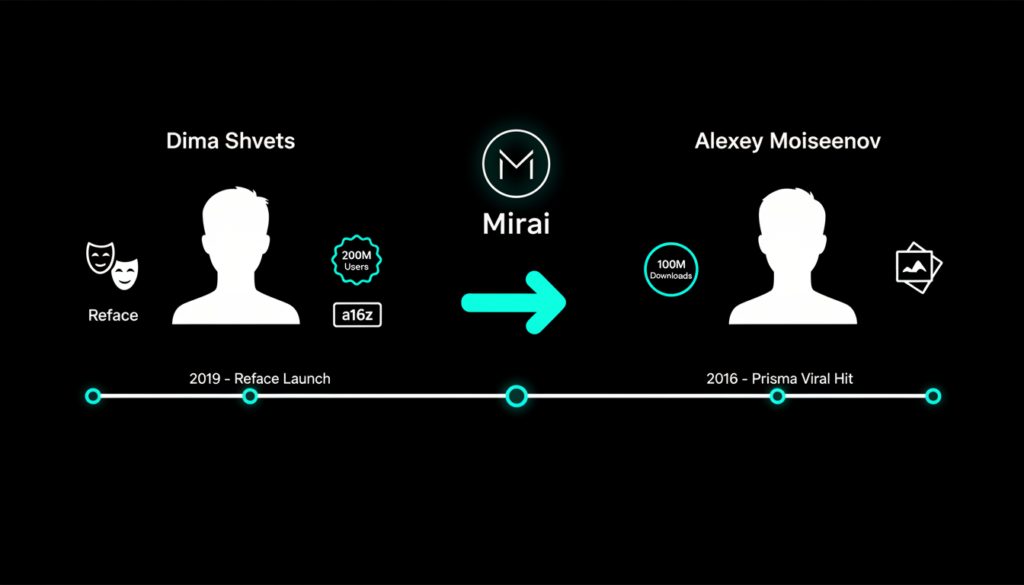

Founders’ Proven Track Record

Dima Shvets co-founded Reface, the viral face-swapping app that amassed over 200 million users and secured backing from Andreessen Horowitz (a16z). Alexey Moiseenkov, meanwhile, led Prisma Labs, creators of the hit AI photo-filter app with 100 million downloads that turned images into artistic masterpieces using neural styles. These consumer hits honed their skills in scalable AI apps long before the generative AI hype.

Meeting in London, the duo spotted a gap: while everyone buzzes about cloud servers and AGI, the on-device potential of consumer hardware remains untapped. Developers want a lower cost per token and the ability to run complex tasks smoothly on phones, and that demand drove Mirai’s creation. Their prior successes taught them the power of intuitive AI interfaces: Reface hit 100 million users in months by simplifying face swaps, while Prisma brought neural style transfer to a mass consumer audience.

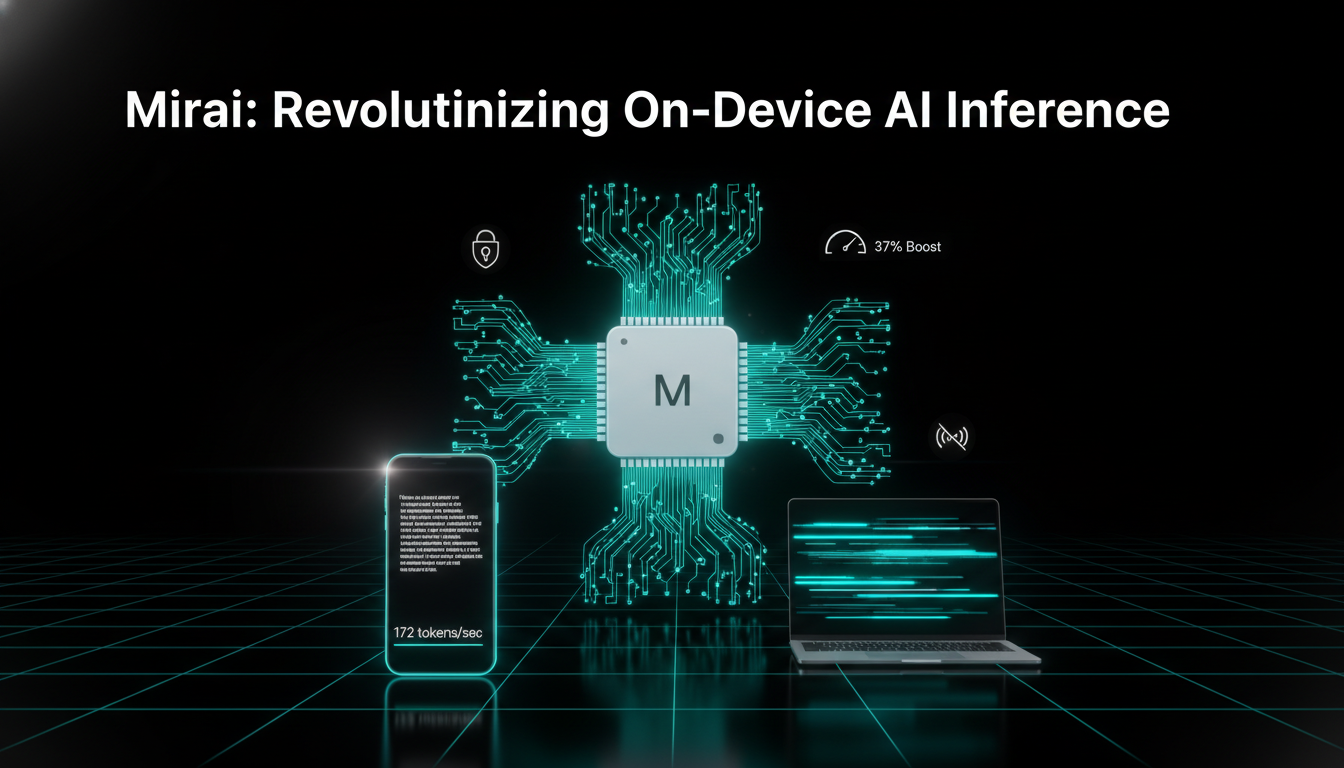

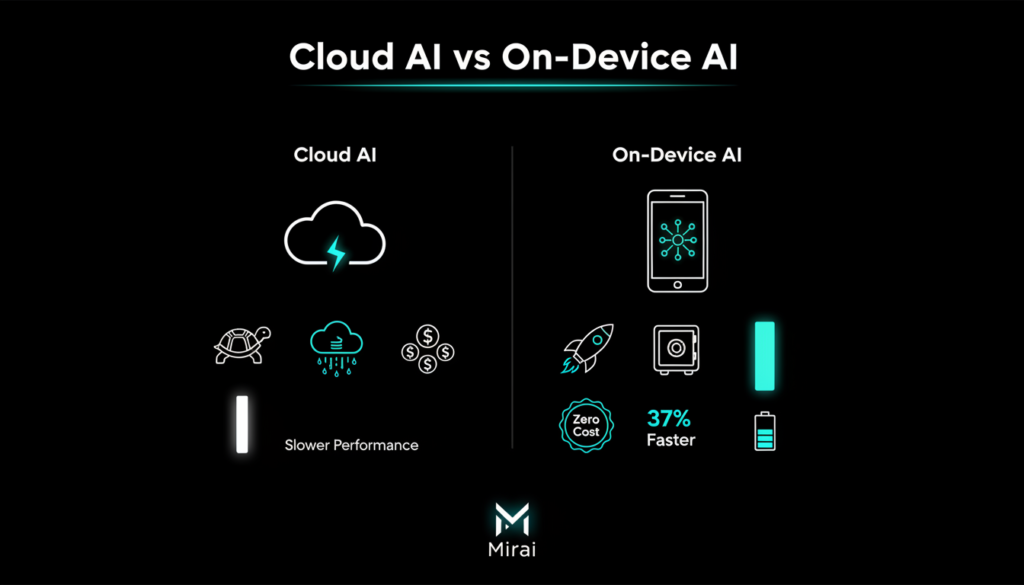

Why On-Device AI Matters Now

Running AI inference on device removes the network round trip from response latency, boosts privacy by keeping data local, and enables offline functionality, which is crucial for real-time apps like chatbots or voice assistants. Unlike cloud setups, which carry high costs and network delays, edge computing leverages the powerful chips in modern devices, such as Apple Silicon. For users in regions with spotty connectivity, like parts of India, this means reliable AI without buffering, which is vital for education apps, language translation, or health diagnostics.

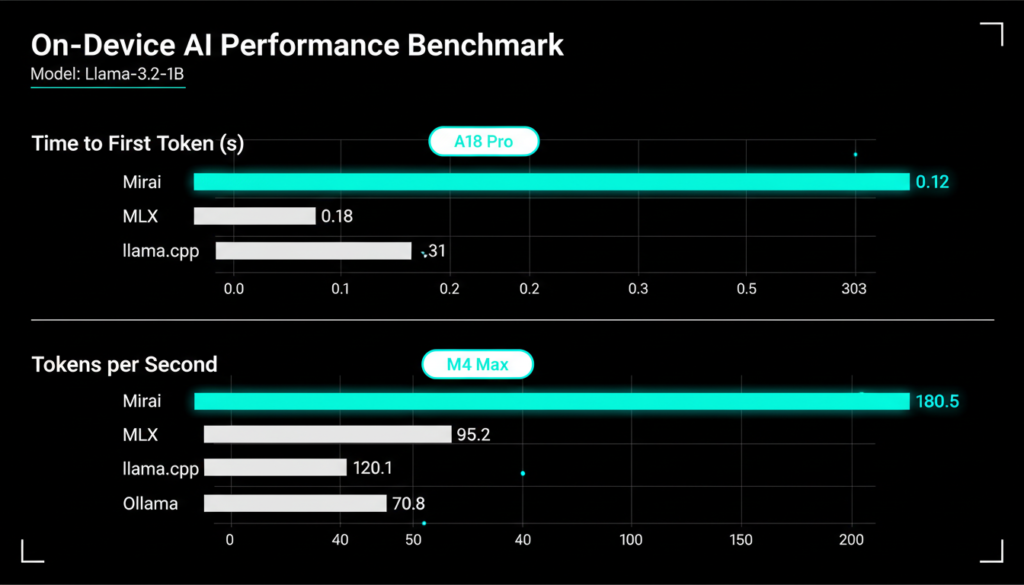

Challenges include battery drain, memory limits, and trade-offs between model size and accuracy, but solutions like quantization and hardware-aware optimization are emerging. Mirai addresses these head-on, outperforming rivals like Apple’s MLX and llama.cpp in benchmarks on M-series chips. Industry trends show on-device AI growing at a 35% CAGR through 2030, driven by privacy regulations like GDPR and India’s DPDP Act that favor local processing.
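To make the size versus accuracy trade-off concrete, here is a minimal sketch of symmetric 8-bit quantization, one common form of the technique. It is a generic illustration, not Mirai’s method (the article notes that Mirai’s speedups come without altering weights), and the function names and sample values are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # An all-zero (or empty) tensor needs no scaling; avoid dividing by zero.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_int8(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the stored integers."""
    return [v * scale for v in quantized]


# Hypothetical sample weights, chosen only for illustration.
weights = [0.12, -0.5, 0.33, 1.27, -1.0]
quantized, scale = quantize_int8(weights)
restored = dequantize_int8(quantized, scale)

# The rounding error per weight is at most half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(quantized, scale, max_error)
```

Each weight is stored in one byte instead of the four bytes of a 32-bit float, so the tensor shrinks by roughly 4x, and the price is the small rounding error measured by `max_error`.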

Technical Edge: Rust-Powered Engine

Mirai’s core is a Rust-built inference engine tailored for Apple Silicon, boosting model speeds by up to 37% without altering weights, which preserves output quality. Their upcoming SDK promises Stripe-like simplicity: integrate with just eight lines of code for tasks like summarization or classification. Rust’s memory safety guarantees help prevent the memory-related crashes common in C++ engines, making it well suited to consumer apps handling sensitive user data.

Currently targeting text and voice, with vision next in line, the engine supports top models like Llama, Gemma, and Qwen. An orchestration layer routes tough queries to the cloud, blending local and remote inference through the same API, in partnership with Baseten. Plans include Android expansion, chipmaker collaborations, and public benchmarks to standardize on-device testing. Benchmarks on devices like the iPhone 16 Pro Max show time to first token as low as 0.041 s, and throughput reaches 172 tokens/sec on the M4 Max. This hybrid model supports scalability, processing 80% of queries locally while offloading the rest efficiently.
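The article does not describe how the orchestration layer decides what goes to the cloud, so the following is only a rough sketch of one possible routing rule. Every name, threshold, and the four-characters-per-token heuristic is an assumption made for illustration, not Mirai’s API. The latency helper plugs in the two benchmark figures quoted above, which were reported on different devices and are combined here purely as an example.

```python
from dataclasses import dataclass

# Assumed limits for a hypothetical on-device model.
LOCAL_CONTEXT_LIMIT = 4096
LOCAL_MAX_NEW_TOKENS = 512


@dataclass
class Request:
    prompt: str
    max_new_tokens: int
    needs_tools: bool = False  # hypothetical flag, e.g. the query needs web search


def estimate_tokens(text: str) -> int:
    """Rough heuristic (assumption): about four characters per token."""
    return max(1, len(text) // 4)


def route(req: Request) -> str:
    """Return "local" or "cloud" for a request under the assumed limits."""
    if req.needs_tools:
        return "cloud"
    if req.max_new_tokens > LOCAL_MAX_NEW_TOKENS:
        return "cloud"
    if estimate_tokens(req.prompt) + req.max_new_tokens > LOCAL_CONTEXT_LIMIT:
        return "cloud"
    return "local"


def local_latency_seconds(new_tokens: int,
                          time_to_first_token: float = 0.041,
                          tokens_per_sec: float = 172.0) -> float:
    """Estimated generation time: time to first token plus steady decoding."""
    return time_to_first_token + new_tokens / tokens_per_sec


short_request = Request("Summarize this note.", max_new_tokens=128)
long_request = Request("x" * 20_000, max_new_tokens=128)
print(route(short_request), route(long_request), local_latency_seconds(172))
```

Because both paths sit behind a single `route` decision, the calling code stays the same whichever way a request goes, which is the property the article attributes to the shared API.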

Funding and Stellar Backers

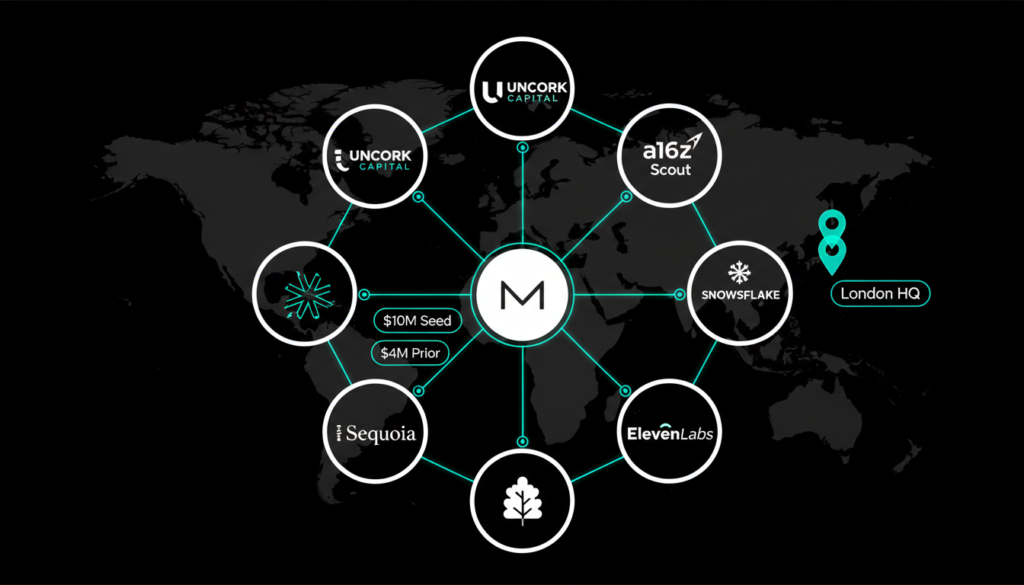

Uncork Capital led the $10M seed, with notable angels like Snowflake co-founder Marcin Żukowski, Coinbase board member Gokul Rajaram, and Scooter Braun. Earlier rounds included $4M from Sequoia and Index Ventures scouts. Investor Andy McLoughlin sees parallels to past edge ML wins, predicting a shift as cloud costs soar: “VCs fund rocketships now, but economics will force edge adoption”.

“Every model maker will run inference at the edge,” he notes, positioning Mirai to capture this trend amid unsustainable cloud spending. Backers bring relevant expertise: Rajaram scaled Google Ads to billions, while Singleton built Dreamer’s real-time AI, aligning with Mirai’s vision. Total funding now exceeds $14M, fueling a 14-person team focused on talent-dense innovation.

Real-World Applications and Use Cases

Mirai’s engine unlocks a range of apps: on-device assistants such as personalized tutors that analyze notes offline, real-time transcribers for meetings in noisy environments, and translators that handle dialects without internet. For content creators, it enables instant video summarization or script generation directly in editing apps. Healthcare sees potential in privacy-first symptom checkers, while gaming could feature adaptive NPCs powered locally for seamless multiplayer.

Developers benefit from granular controls like token-based pricing, model versioning, and A/B testing, all without cloud vendor lock-in. Early adopters report 5x cost reductions versus API calls, with seamless scaling. In India, where 600M+ smartphone users face data caps, Mirai-like tech could power vernacular AI for farmers tracking crops or students accessing edtech.
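As a back-of-the-envelope check on how a hybrid split affects the bill, the sketch below computes blended cost for a given share of local queries. The per-query cloud price and query volume are hypothetical, and treating local inference as free to the developer is an assumption. Under those assumptions, the 80% local share mentioned earlier works out to a 5x reduction, though the article does not say that this is how the reported figure was derived.

```python
def blended_cost(total_queries: int,
                 cloud_cost_per_query: float,
                 local_fraction: float,
                 local_cost_per_query: float = 0.0) -> float:
    """Total cost when a fraction of queries runs locally and the rest in the cloud."""
    if not 0.0 <= local_fraction <= 1.0:
        raise ValueError("local_fraction must be between 0 and 1")
    local_queries = total_queries * local_fraction
    cloud_queries = total_queries - local_queries
    return (local_queries * local_cost_per_query
            + cloud_queries * cloud_cost_per_query)


# Hypothetical workload: one million queries at an assumed $0.002 per cloud query.
all_cloud = blended_cost(1_000_000, 0.002, local_fraction=0.0)
hybrid = blended_cost(1_000_000, 0.002, local_fraction=0.8)
reduction = all_cloud / hybrid
print(all_cloud, hybrid, reduction)
```

With a zero local cost the reduction factor is simply 1 / (1 - local_fraction), so it is the local share, not the cloud price, that drives the savings.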

Future Roadmap and Industry Impact

Mirai’s roadmap targets multimodal support (text, voice, vision) across Apple and Android, with its benchmarks aiming to become an industry standard, as GLUE did for NLP. Collaborations with model providers tune frontier LLMs for the edge, while talks with chipmakers aim to ensure compatibility with Qualcomm Snapdragon and MediaTek. Their goal: default on-device execution, cutting AI’s global carbon footprint by shifting work away from power-hungry data centers.

As edge AI matures, expect integrations in iOS 20 and Android 16, amplifying features like Live Translate or Siri. For developers worldwide, Mirai democratizes advanced AI, leveling the playing field against Big Tech. The careers page hints at rapid hiring, signaling aggressive expansion.
