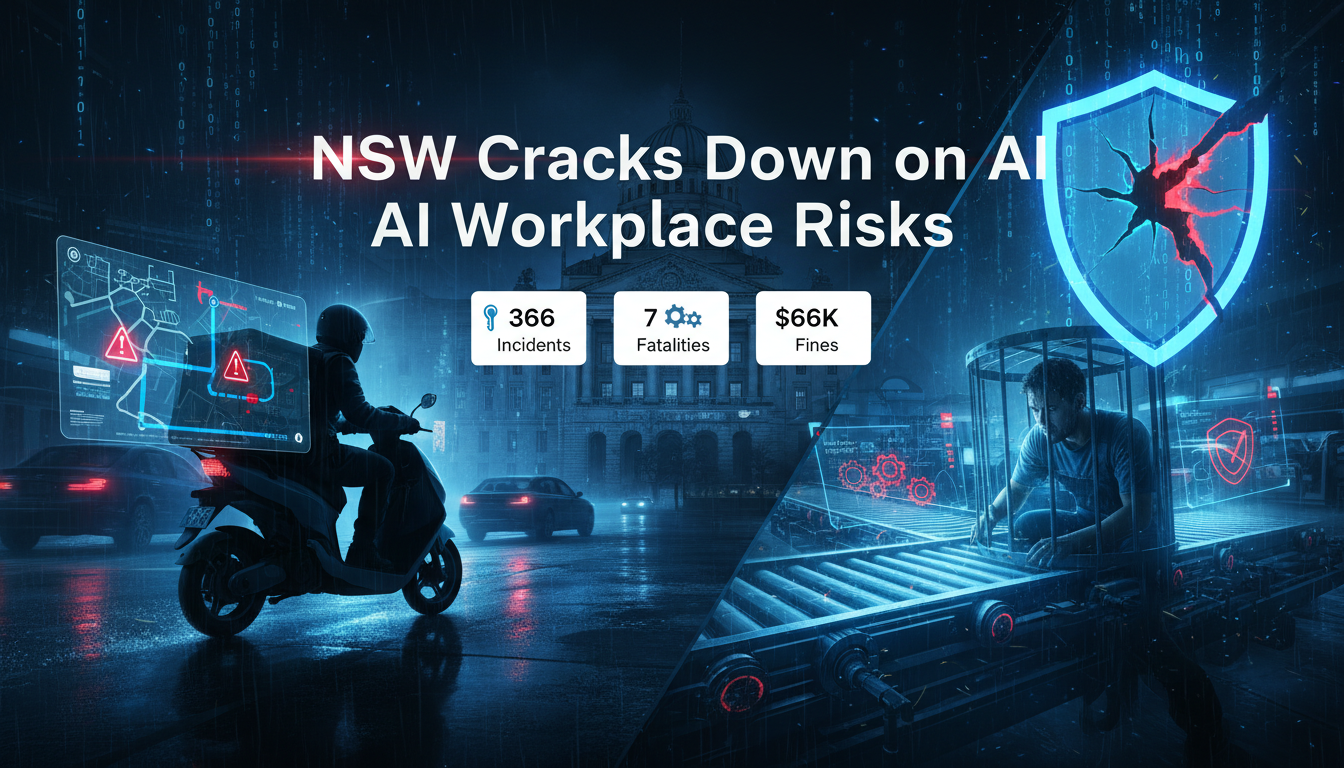

New South Wales (NSW) is pioneering laws to protect workers from AI’s hidden dangers, making employers directly liable for harms from algorithms and digital tools. This bold step addresses gig economy perils and warehouse pressures, but it’s igniting fierce debates on business costs and union power.

Bill Targets AI and Algorithm Harms

The Work Health and Safety Amendment (Digital Work Systems) Bill 2025/26 explicitly holds employers accountable for physical injuries, mental health strain, or other harms stemming from AI systems, mobile apps, or algorithmic decision-making. This covers high-stakes environments like ridesharing, food delivery, and automated warehouses, where opaque algorithms often prioritize speed over safety.

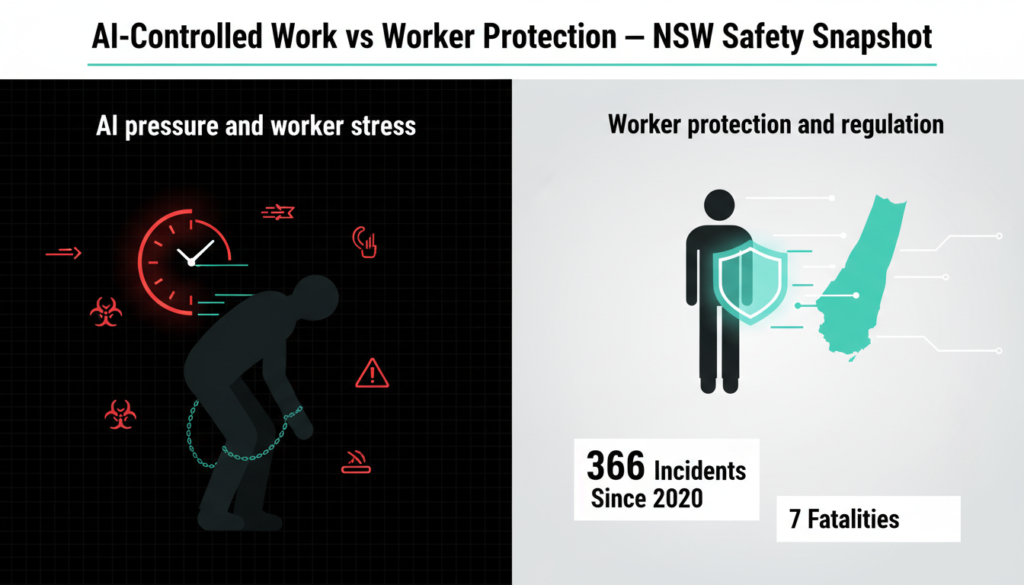

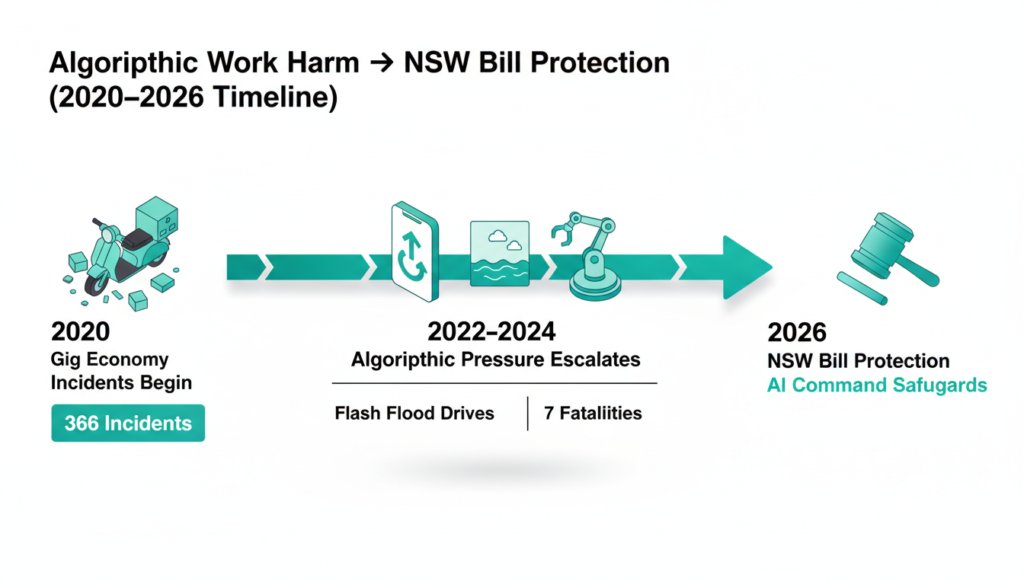

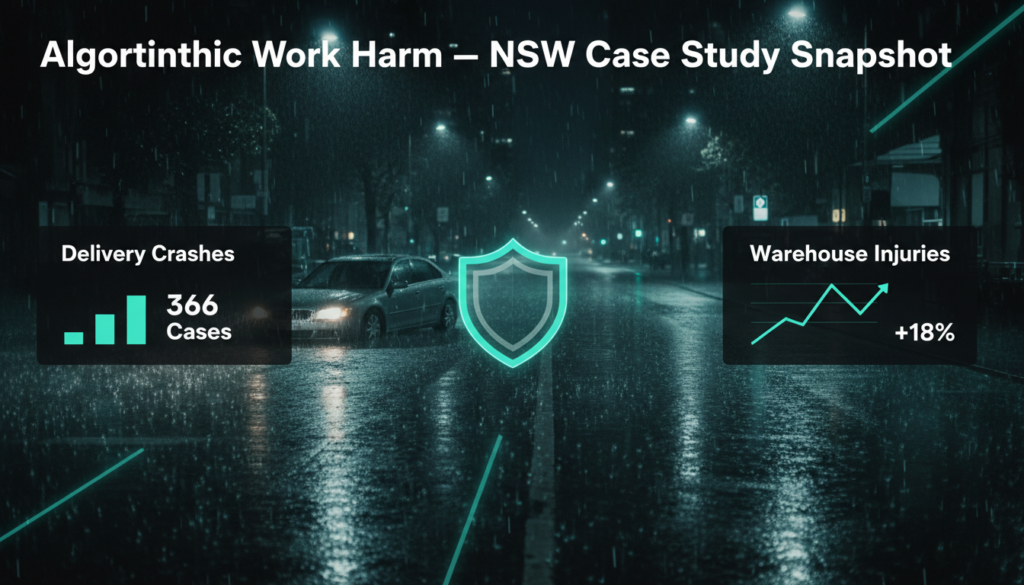

Labor Treasurer Daniel Mookhey laid it out starkly in the February 5 Legislative Council debates: rideshare drivers are being coerced into illegal U-turns or no-parking violations by apps desperate for faster ETAs. SafeWork NSW’s 2025 annual report logs 366 incidents involving gig food delivery workers since 2020, including seven fatalities, often from rushed night shifts or poor routing. Warehouse workers aren’t spared; algorithms in centers built on Amazon-inspired models track “units per hour,” leading to repetitive strain injuries that spiked 18% in NSW logistics from 2022 to 2025.

A chilling case: in 2024, a home-care gig worker’s app routed her through flash floods, which swept her vehicle away and left her with severe trauma. SafeWork data shows algorithm complaints have surged 25% since 2023, with psychological harms like anxiety from constant surveillance now classified as “foreseeable risks.”

Boosting Union Access to Digital Systems

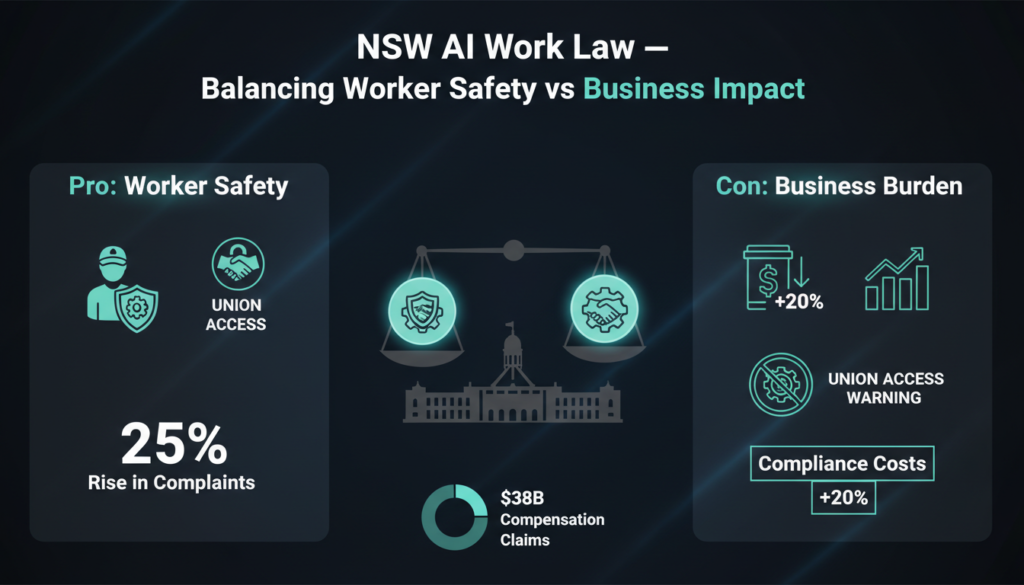

Beyond liability, the bill empowers health and safety permit holders, often union safety reps, to access and audit digital systems. They can scrutinize performance metrics, GPS logs, and AI decision trails, ensuring transparency without endless bureaucracy.

Premier Chris Minns frames it as a moral imperative: “Rules, oversight, and no more secrets for workers.” It amends the Work Health and Safety Act 2011 to explicitly include “digital work systems,” from gig apps to predictive analytics.

Globally, this echoes the EU AI Act’s “high-risk” category for workplace tools. The Act entered into force in August 2024, and its transparency and human-oversight obligations for high-risk systems phase in over the following years. The ILO’s 2025 World Employment report ties 8-10% of global occupational injuries to algorithmic monitoring, with developing economies hit hardest. In Australia, a 2025 federal AI safety consultation (via the Department of Industry) flagged similar gaps, but NSW is leading with teeth.

The bill passed the lower house swiftly but faces scrutiny in the upper house, where debate was adjourned on February 5.

Opposition: Too Much Burden on Businesses?

Liberal voices are pushing back hard. Upper House MP Rachel Merton branded it “union overreach,” arguing the broad “digital work system” definition sweeps in every app-using business, from cafes with scheduling software to remote teams on Slack. Fines for blocking access? A whopping $66,770 for corporations and $13,310 for sole traders: punitive, they say.

“I oppose this wholly union-owned legislation,” Merton declared in parliament. Opposition Leader Kellie Sloane slammed the rush: “Bad laws granting unprecedented IT access. Listen to business first.”

Business lobbies agree. The Australian Chamber of Commerce’s 2025 survey revealed 62% of NSW SMEs fear 15-20% compliance cost hikes, straining small operators already grappling with post-pandemic recovery. icare’s 2024 figures peg national workers’ comp at $38 billion, with digital factors in 12% of claims, yet critics question whether broad audits solve root causes or just empower unions.

Real Cases and Broader Impacts

Consider Uber Eats crashes in Sydney’s wet seasons or Woolworths-style warehouse quotas linked to 2024 injury clusters. A 2025 University of Sydney study found 40% of gig workers experience “algorithmic burnout,” with NSW’s bill aiming to mandate “safety vetoes.”

Federally, Safe Work Australia’s 2026 roadmap eyes national AI duties, inspired by NSW.

Global Lessons for Australia’s AI Future

NSW draws from California’s AB 2023 (requiring bias audits) and the UK’s HSE algorithm pilots, which cut incidents by 14% in trials. Experts like Dr. Sarah Chen (UNSW AI ethics lead) note: “This prevents a U.S.-style litigation mess.”

Implementation kicks in mid-2026: SafeWork will train 500 inspectors, and platforms must disclose their algorithms.

What’s Next for AI at Work?

If passed, expect app redesigns, “AI safety officers” in firms, and lawsuits testing boundaries. For tech watchers, it’s a bellwether: AI boosts productivity 40% (McKinsey 2025), but unchecked, it erodes human safety. NSW’s gamble could redefine ethical AI Down Under.
