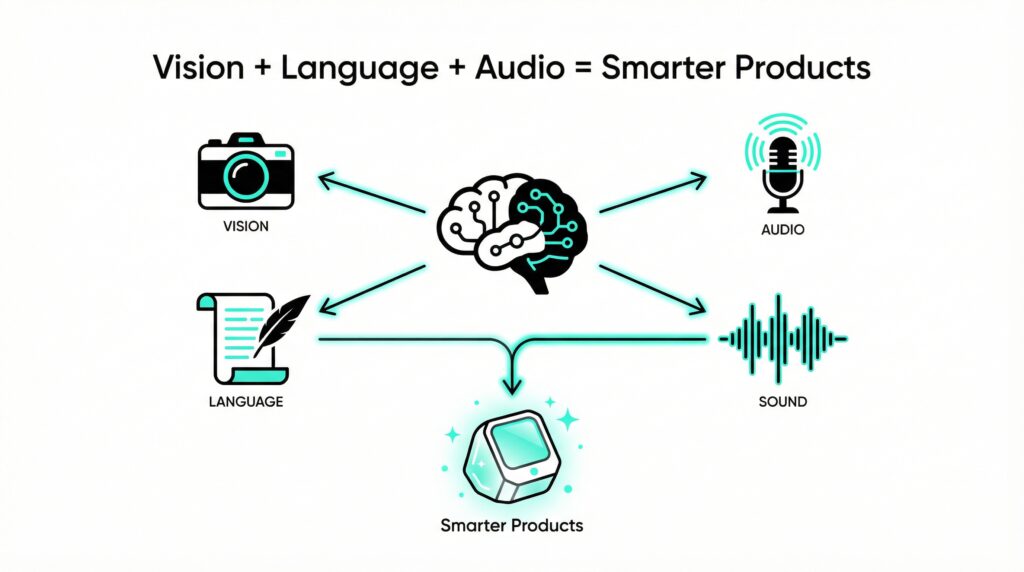

AI has evolved far beyond processing text and numbers alone. Multimodal AI integrates vision, speech, text, and sensory data, allowing systems to see, hear, speak, and reason across multiple inputs at once. In 2026 and beyond, this shift is redefining how digital products are conceived, designed, and delivered. For product teams, multimodal AI is not just another trend; it fundamentally changes prototyping speed, user personalization, and real-time decision-making, enabling experiences that feel more intuitive, adaptive, and human than ever before.

Multimodal AI Basics

Traditional AI systems are typically built to process a single data type at a time, whether that’s text, images, or audio. Multimodal AI breaks this limitation by fusing vision, language, sound, and contextual signals into one unified system. Instead of switching between isolated models, it understands and reasons across inputs simultaneously. Imagine an AI that can interpret diagrams, summarize videos, analyze customer reviews, and respond through voice or text in a continuous workflow. This convergence unlocks enormous potential across the product pipeline, accelerating insights, improving user experience, and enabling smarter, more adaptive products.
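Open vision-language models such as LLaVA use one common pattern to build such a unified system. Each modality is encoded separately, the results are projected into the language model's embedding space, and everything goes to a single model as one sequence. The toy sketch shows only that projection-and-concatenation step. The two-dimensional vectors, the lookup table, and the function names `project` and `fuse_sequence` are illustrative assumptions. Real models learn these weights and use embeddings with thousands of dimensions.

```python
from typing import Dict, List

Vector = List[float]


def project(features: Vector, weights: List[Vector]) -> Vector:
    """Multiply a p-dim feature vector by a d x p matrix (a list of d rows) to get a d-dim vector."""
    return [sum(w * f for w, f in zip(row, features)) for row in weights]


def fuse_sequence(
    text_tokens: List[str],
    token_table: Dict[str, Vector],
    image_patches: List[Vector],
    image_proj: List[Vector],
) -> List[Vector]:
    """Return one sequence in a shared d-dim space: projected image patches, then text tokens."""
    image_embeds = [project(patch, image_proj) for patch in image_patches]
    text_embeds = [token_table[token] for token in text_tokens]
    sequence = image_embeds + text_embeds
    # Every element must live in the same space so one model can attend over all of them.
    assert len({len(v) for v in sequence}) <= 1, "modalities must share one embedding size"
    return sequence


if __name__ == "__main__":
    table = {"describe": [1.0, 0.0], "chart": [0.0, 1.0]}  # toy 2-dim text embeddings
    patches = [[0.5, 0.25, 0.25], [1.0, 0.0, 0.0]]           # toy 3-dim image patch features
    proj = [[1.0, 0.0, 0.0], [0.0, 1.0, 1.0]]                 # toy 2 x 3 projection matrix
    print(fuse_sequence(["describe", "chart"], table, patches, proj))
```

Because image patches and words end up as vectors of the same size in one sequence, a single model can reason over both together. It does not need to hand results between isolated models.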

Why Product Teams Need It Now

From SaaS to eCommerce, multimodal AI delivers real wins:

- Turbocharged Prototyping: Feed in sketches, voice notes, and written feedback to generate app prototypes almost instantly, using natively multimodal models such as GPT-4o that accept text, image, and audio input.

- Smarter UX Testing: Multimodal models can scan session recordings, heatmaps, and user comments together to flag usability issues automatically, cutting guesswork.

- Hyper-Personalization: Combine voice input, interaction history, and facial cues to tailor experiences at scale.

Top Use Cases Today

Leading teams apply multimodal AI across:

| Use Case | How It Powers Products |
|---|---|
| Product Discovery | Analyzes images, videos, and reviews for insights. |
| Feature Prioritization | Scores ideas from voice feedback and data. |
| Content Generation | Creates visuals and copy from mixed inputs. |
| Customer Support | Handles text, voice, and image queries. |
| Accessibility | Generates alt text, captions, and adaptations. |
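Here is a hypothetical scoring helper that gives a rough illustration of the Feature Prioritization row. It makes three simplifying assumptions. First, voice feedback has already been transcribed to text by a speech-to-text model. Second, naive keyword matching stands in for a real language model. Third, the score blends mention frequency with a made-up `usage_share` metric, and the default 0.6 weight is arbitrary.

```python
from collections import Counter
from typing import Dict, List


def score_features(
    transcripts: List[str],
    feature_keywords: Dict[str, List[str]],
    usage_share: Dict[str, float],
    mention_weight: float = 0.6,
) -> Dict[str, float]:
    """Blend the share of transcripts mentioning a feature with its usage share (both 0..1)."""
    mentions: Counter = Counter()
    for text in transcripts:
        lowered = text.lower()
        for feature, keywords in feature_keywords.items():
            # Count each transcript at most once per feature.
            if any(keyword in lowered for keyword in keywords):
                mentions[feature] += 1
    total = len(transcripts) or 1  # avoid dividing by zero when there is no feedback yet
    return {
        feature: mention_weight * mentions[feature] / total
        + (1 - mention_weight) * usage_share.get(feature, 0.0)
        for feature in feature_keywords
    }


if __name__ == "__main__":
    transcripts = [  # pretend these came from a speech-to-text step
        "The export to PDF keeps failing",
        "I wish search understood my photos",
        "Export is really slow",
        "Love the dark mode",
    ]
    keywords = {"export": ["export"], "visual_search": ["search", "photo"], "dark_mode": ["dark mode"]}
    usage = {"export": 0.5, "visual_search": 0.1, "dark_mode": 0.4}
    ranked = sorted(score_features(transcripts, keywords, usage).items(), key=lambda kv: -kv[1])
    print(ranked)
```

In practice, a multimodal model would replace the keyword matching. The useful idea is the last step: qualitative feedback and quantitative usage data end up on one comparable scale.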

Game-Changing Tools for 2026

In creative production, Runway ML has become a go-to solution for generating and editing high-quality videos directly from text or image prompts. It empowers marketing, media, and product teams to visualize concepts instantly, cutting down production timelines while expanding creative experimentation. Similarly, Figma’s AI features transform design workflows by converting text descriptions (including transcribed voice notes) into functional UI layouts, allowing designers and product managers to collaborate earlier and validate ideas before writing a single line of code.

On the research and decision-making front, Perplexity AI enhances traditional search by blending visual context with concise, cited answers. This visually enhanced approach helps teams quickly grasp complex topics, compare options, and ground strategic choices in sourced, multimodal insights, which is especially valuable in fast-moving product and engineering environments.

Crucially, most of these platforms offer APIs and developer tooling, which makes integration straightforward. Rather than rebuilding systems from scratch, teams can automate workflows, enrich existing applications with multimodal capabilities, and scale innovation incrementally. The takeaway is clear: experimenting with these tools today doesn’t just improve efficiency; it shortens feedback loops, boosts personalization, and accelerates your entire product development cycle in a multimodal-first world.
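As one example of enriching an existing application through an API, here is a minimal sketch that pairs a text prompt with an inline screenshot. It uses OpenAI's Chat Completions request format, where a message's `content` can mix `text` and `image_url` parts. Runway, Perplexity, and Figma each expose their own, different APIs. The model name and the helper names `build_vision_request` and `send` are assumptions, so check the current provider documentation before relying on them.

```python
import base64
import json
import urllib.request


def build_vision_request(prompt: str, image_bytes: bytes, mime: str = "image/png",
                         model: str = "gpt-4o") -> dict:
    """Build a Chat Completions request body that pairs text with an inline (base64) image."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "text", "text": prompt},
                {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}},
            ],
        }],
    }


def send(body: dict, api_key: str) -> dict:
    """POST the body to the Chat Completions endpoint and return the parsed JSON response."""
    req = urllib.request.Request(
        "https://api.openai.com/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {api_key}"},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)


if __name__ == "__main__":
    # Placeholder bytes stand in for a real screenshot read with open(path, "rb").read().
    body = build_vision_request("List three usability issues in this screen.", b"fake-png-bytes")
    print(body["messages"][0]["content"][1]["image_url"]["url"][:40])
    # To call the API for real: send(body, os.environ["OPENAI_API_KEY"])
```

Keeping request construction separate from the network call makes it easy to log, test, or swap providers without touching the rest of the workflow.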

No-Code Multimodal AI for All

No-code platforms turn multimodal AI into “Lego kits”: drag-and-drop blocks for building agents without writing code.

How to Build One

- Pick your agent (chatbot, assistant, etc.).

- Connect data sources (docs, images, and APIs from tools like Notion or your CRM).

- Mix models for text, images, and audio.

- Define the logic for handling queries.

- Deploy to the web, a mobile app, or an existing site.
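To make the steps above concrete, here is a hypothetical code equivalent of what a visual builder assembles. This is not Flowise's (or any platform's) actual API. The stub functions `answer_text` and `describe_image` stand in for real text and vision models, and `catalog.csv` is an invented data source.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Union

Payload = Union[str, bytes]
Model = Callable[[Payload], str]


def answer_text(data: Payload) -> str:
    """Stand-in for a text model (hypothetical; a real agent would call an LLM here)."""
    return f"answer to: {data}"


def describe_image(data: Payload) -> str:
    """Stand-in for a vision model (hypothetical)."""
    return f"image of {len(data)} bytes received"


@dataclass
class Agent:
    name: str                                               # step 1: pick the agent
    data_sources: List[str] = field(default_factory=list)   # step 2: connect data
    models: Dict[str, Model] = field(default_factory=dict)  # step 3: mix models per modality

    def handle(self, modality: str, payload: Payload) -> str:
        """Step 4: route each query to the model registered for its input type."""
        model = self.models.get(modality)
        if model is None:
            return f"{self.name}: sorry, I can't handle {modality} input yet."
        return model(payload)


if __name__ == "__main__":
    shop = Agent(
        name="shop-assistant",
        data_sources=["catalog.csv"],  # hypothetical product export
        models={"text": answer_text, "image": describe_image},
    )
    print(shop.handle("text", "red running shoes under $100"))
    print(shop.handle("image", b"\x89PNG"))
    print(shop.handle("audio", b""))
```

Step 5, deployment, would wrap `handle` in a web endpoint or chat widget. Visual builders such as Flowise take care of that part, which is much of their appeal for non-developers.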

Real Example: An eCommerce AI shopping assistant that handles photo uploads, voice descriptions, and add-to-cart actions, built in hours with Flowise.

| Platform Type | Key Capabilities | Highlights |
|---|---|---|
| No-Code | Visual builders, instant deploy | Flowise, Bubble AI extensions |
| Low-Code | Custom logic, API integrations | Voiceflow, n8n with AI nodes |

These tools democratize AI: designers and PMs can launch, test, and iterate on an agent in a day.

Ready to Dive In? Let’s Get Started

Multimodal AI is the new foundation for products. Early adopters will redefine speed and UX. Start small: test tools in your existing workflows, integrate APIs, and iterate fast. Stay curious. The future rewards the bold.

Your Roadmap to Multimodal AI Mastery

As we stand in early 2026, multimodal AI isn’t a distant horizon; it’s here, accelerating product development at unprecedented speeds. Teams ignoring it risk obsolescence, while adopters gain a competitive edge through smarter prototypes, intuitive UX, and experiences that feel eerily personal. Some early integrators report 40-60% faster iteration cycles and 25% higher user satisfaction scores, though results vary by team, product, and use case.

To make this actionable, follow this 4-week roadmap:

Week 1: Explore & Experiment – Try the free tiers of GPT-4o (via ChatGPT) and Runway ML, plus a no-code builder like Flowise. Test basic prototypes built from your sketches or voice notes.

Week 2: Integrate Workflows – Use APIs to embed AI into tools like Figma or your CRM. Analyze a recent user session video for UX insights.

Week 3: Build & Test – Launch a simple agent (e.g., a support chatbot that handles images and text). A/B test it against your current setup and measure engagement.

Week 4: Scale & Iterate – Gather team feedback, refine with multimodal personalization, and document wins for your roadmap.
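For the Week 3 A/B test, a standard two-proportion z-test is one simple way to check whether a difference in engagement is more than noise. The sketch assumes "engagement" means the share of sessions with at least one meaningful interaction. The function name and the sample counts are hypothetical.

```python
import math
from typing import Tuple


def two_proportion_z_test(engaged_a: int, n_a: int, engaged_b: int, n_b: int) -> Tuple[float, float]:
    """Compare engagement rates of control (A) and variant (B).

    Returns (z, two_sided_p). Positive z means B engaged a larger share of sessions than A.
    """
    p_a = engaged_a / n_a
    p_b = engaged_b / n_b
    pooled = (engaged_a + engaged_b) / (n_a + n_b)  # rate under the "no difference" hypothesis
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:  # both groups at 0% or 100%: no evidence of a difference
        return 0.0, 1.0
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: current setup vs. new multimodal support agent.
    z, p = two_proportion_z_test(engaged_a=120, n_a=1000, engaged_b=150, n_b=1000)
    print(f"z = {z:.2f}, p = {p:.3f}")
```

With these made-up counts (12% vs. 15% engagement), z comes out near 1.96 and p near 0.05. That is a borderline result, which argues for collecting more sessions before declaring a winner.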

Overcome common hurdles: start with small pilots to build buy-in, prioritize data privacy (choose models and vendors that meet your compliance requirements), and upskill via free resources like the OpenAI API documentation or Perplexity searches.
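One concrete way to act on the data-privacy point is to strip obvious personal data before any text leaves your systems. This is a minimal regex-based sketch, and its patterns are assumptions. It will miss many kinds of PII (names, addresses, account IDs), and the naive phone pattern can also catch long digit runs such as dates. Treat it as a starting point rather than a compliance control.

```python
import re

# Hypothetical, deliberately simple patterns; extend or replace them for real compliance needs.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    # Naive: an optional "+", then 9+ characters of digits, spaces, dots, dashes, or parentheses.
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> str:
    """Replace each match with a label such as [EMAIL] before text is sent to an external model."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    print(redact("Contact jane.doe@example.com or +1 (555) 123-4567 about order 42."))
```

Running redaction as a separate step before any API call keeps the privacy rule in one place, so it applies no matter which model or vendor sits downstream.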

Embrace the Multimodal Revolution

The bold win in product development. Multimodal AI empowers you to dream bigger, crafting products that adapt, anticipate, and delight in ways unimagined a year ago. Don’t wait for perfection; prototype boldly, learn relentlessly, and lead the charge.

Ready to transform your pipeline? Connect with me to craft your AI-first strategy. The future is multimodal; seize it today.